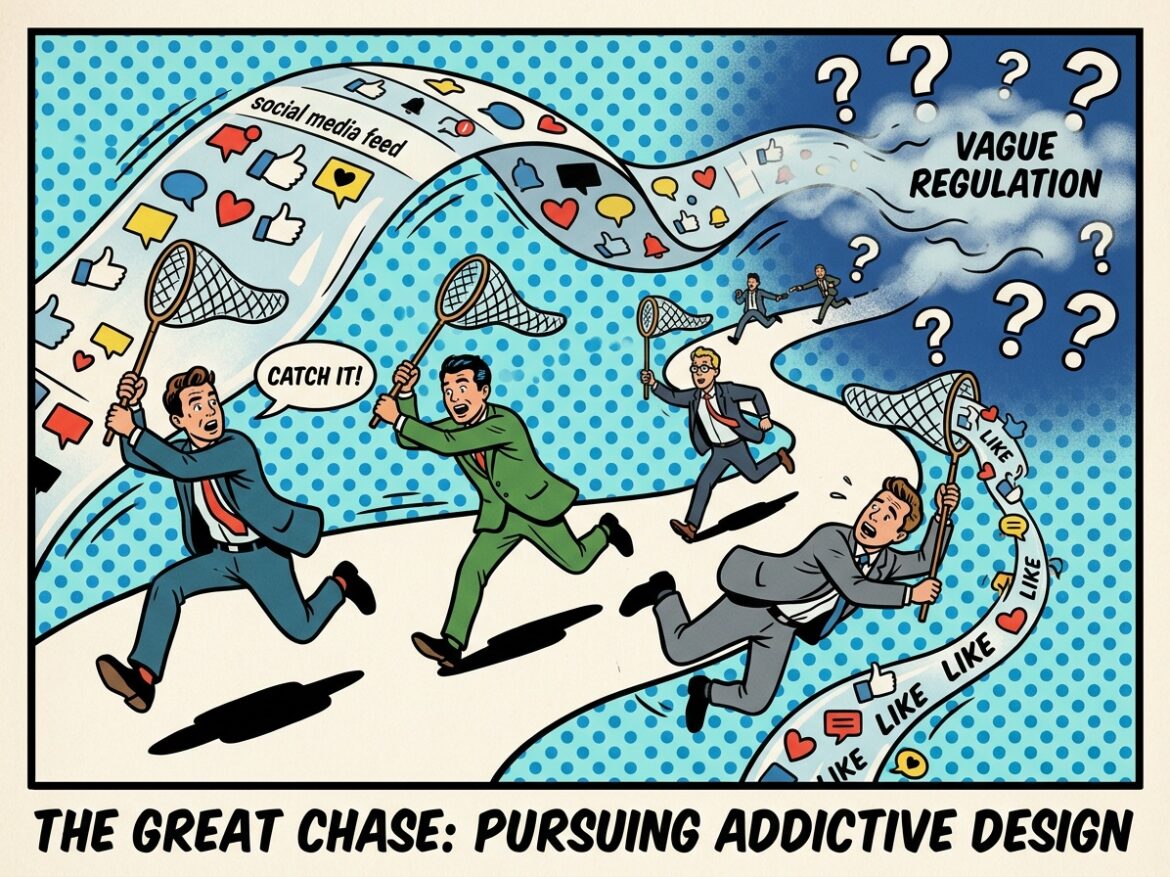

A lawsuit over infinite scroll sounds, at first blush, like a fight over product design. Make the app less sticky. Stop nudging teens to keep scrolling. Turn down the algorithmic dopamine machine.

But the harder constitutional question is whether courts can do all that through broad, after-the-fact liability standards without telling platforms what the law actually requires. The legal world is currently watching a high-stakes collision between two foundational principles: the government’s power to protect children from allegedly “addictive” technology, and the constitutional requirement that laws give regulated parties fair notice of what conduct is illegal. As the Supreme Court explained in Grayned v. City of Rockford, “we insist that laws give the person of ordinary intelligence a reasonable opportunity to know what is prohibited, so that he may act accordingly.”

That tension is now coming to a head. Appeals are likely in both New Mexico v. Meta and K.G.M. v. Meta, even as federal courts continue issuing rulings in the NetChoice litigation challenging state social-media laws, including in Arkansas. Together, these cases are exposing a basic fault line in modern tech regulation: Can courts impose liability on social-media platforms under broad “negligence” or “unfair trade practices” theories, or are those standards simply too vague to satisfy constitutional due-process requirements?

The answer appears to turn largely on how courts characterize core platform features, such as algorithmic recommendations, infinite scroll, disappearing messages, autoplay, and push notifications. Put simply, are these features protected First Amendment activity, or merely product design?

That distinction matters enormously. If courts treat these features as protected speech or editorial discretion, then laws targeting them face constitutional scrutiny. Courts must ask whether the law restricts more speech than necessary, and whether the law—or its application—is too vague for regulated parties to know when they are crossing the line.

If, by contrast, courts treat these features as mere conduct, then the First Amendment largely drops out of the analysis. Courts instead presume that businesses have adequate notice of how broad negligence or consumer-protection laws apply to platform design choices.

Scrolling Into Tort Law

Before the jury trials in New Mexico and Los Angeles, courts largely treated social-media features as conduct, rather than speech, and allowed what amounted to product-liability theories to proceed. In denying Meta’s motion for summary judgment, the California court framed the issue this way:

[T]he allegedly addictive features of Defendants’ platforms (such as endless scroll) cannot be analogized to how a publisher chooses to make a compilation of information, but rather are based on harm allegedly caused by design features that affect how Plaintiffs interact with the platforms regardless of the nature of the third-party content viewed.

That framing matters because it shifts the case away from the First Amendment and into ordinary tort law. Under California negligence law—as in most jurisdictions—a plaintiff must show that the defendant: 1) owed a duty of care, 2) breached that duty, 3) proximately caused harm, and 4) caused legally cognizable damages. In ordinary commercial relationships, businesses generally must act reasonably under the circumstances to avoid foreseeable harm.

The theory against Meta therefore sounds familiar, at least at a high level. A car manufacturer may be negligent if it designs defective brakes. Likewise, plaintiffs argue, Meta may be negligent if it designs platform features that foreseeably contribute to harms like compulsive use, self-harm, or other mental-health injuries among minors.

From this perspective, the common-law “reasonableness” standard is not impermissibly vague because American courts apply it every day. Businesses are expected to behave reasonably, even when the standard itself cannot be reduced to a precise checklist.

The same basic logic applies to unfair-trade-practices claims. To prove “unfairness” under laws like New Mexico’s, the state typically must show that a defendant’s conduct: 1) caused substantial consumer injury, 2) inflicted harms consumers could not reasonably avoid, and 3) produced harms not outweighed by countervailing benefits to consumers or competition.

Again, the analogy is straightforward. If a company places an unreasonably dangerous product into the marketplace, that may qualify as an unfair trade practice. Plaintiffs argue that Meta’s allegedly addictive design features similarly create unavoidable and substantial harms for users.

Courts traditionally give regulators and plaintiffs considerable leeway when these standards apply to conduct rather than speech. The argument, in other words, is that negligence and unfairness doctrines provide sufficient notice to businesses, even if they operate through flexible, case-by-case standards.

That distinction also shapes vagueness analysis. Courts generally apply less demanding scrutiny to civil laws regulating economic conduct than to laws burdening speech. Even courts that have pushed back on the Federal Trade Commission’s (FTC) use of Section 5 unfairness authority have still recognized that principle. In FTC v. Wyndham, for example, the 3rd U.S. Circuit Court of Appeals explained that fair-notice standards are “especially lax for civil statutes that regulate economic activities.” Under that framework, a law fails for vagueness only if it is “so vague as to be no rule or standard at all.”

The Constitution Hates Vibes-Based Liability

The analysis changes once courts conclude that speech—or at least editorial discretion—is involved. When laws burden First Amendment-protected activity, courts demand far more clarity about what conduct is prohibited.

As the Supreme Court explained in Village of Hoffman Estates v. Flipside, Hoffman Estates, Inc.:

[P]erhaps the most important factor affecting the clarity that the Constitution demands of a law is whether it threatens to inhibit the exercise of constitutionally protected rights. If, for example, the law interferes with the right of free speech or of association, a more stringent vagueness test should apply.

That heightened scrutiny reflects a familiar concern in First Amendment law: vague rules chill speech. Faced with uncertain liability, speakers and publishers often self-censor rather than risk litigation.

Two recent federal decisions striking down Arkansas social-media laws at the request of NetChoice turned heavily on that principle. In both cases, the U.S. District Court for the Western District of Arkansas concluded that heightened vagueness scrutiny applied because the laws targeted platform features intertwined with speech and editorial judgment.

That makes sense. Social-media platforms necessarily organize, rank, filter, and recommend enormous amounts of user-generated content. The moment a law tells a platform how it may present speech, First Amendment questions arise.

The Arkansas court captured the problem vividly:

In a world where billions of pieces of content are posted on social media every day, social media would be functionally useless as a “vast democratic forum[ ]” if platforms were not allowed to use any algorithm—any system—for selecting and ordering content to display to users… If a social media platform was a library, banning algorithms would be roughly equivalent to requiring books be placed on shelves at random. Such a prohibition would burden users’ (or library patrons’) First Amendment rights by making it significantly more difficult to access speech a user wishes to receive, so a state probably could not constitutionally ban algorithms for the organization of speech (on social media or elsewhere) altogether…

[The law] does not prohibit all algorithms…[but it] forces covered services to restrict as to all users—minor or adult—anything that could have a forbidden effect (here, addiction) on any users—again, minor or adult.

That concern drove the court’s treatment of Arkansas Act 901, which imposed a negligence-style duty on platforms that use a “design, algorithm, or feature” that the platform:

[K]nows, or should know through the exercise of reasonable care, causes a user to: (1) Purchase a controlled substance; (2) Develop an eating disorder; (3) Commit or attempt to commit suicide; or (4) Develop or sustain an addiction to the social media platform.

The court concluded the law was likely “unconstitutionally vague” because it “fails to specify a standard of conduct to which platforms can conform.” Liability, the court warned, depended on “the sensitivities of some unspecified user” and on what a judge or jury later decided the platform “should have known” about those sensitivities.

In other words, ordinary negligence standards may work tolerably well for defective brakes or slippery floors. They become far murkier when applied to speech-ranking systems used by billions of people with wildly different psychological responses and preferences.

The court found Arkansas Act 900 even more problematic. Unlike Act 901’s negligence framework, Act 900 effectively imposed strict liability. It required platforms to “ensure” they:

[Do] not engage in practices to evoke any addiction or compulsive behaviors in an Arkansas user who is a minor, including without limitation through notifications, recommended content, artificial sense of accomplishment, or engagement with online bots that appear human.

The court concluded that “liability under Act 900 is even more uncertain than under Act 901.” The law reached beyond addiction to the platform itself to cover “any addiction or compulsive behaviors,” and it imposed liability even where a company “could not have known through the exercise of reasonable care” that a feature would affect a particular child in a particular way.

That, ultimately, was the constitutional problem. The court found the statute likely void for vagueness because “[b]usinesses of ordinary intelligence cannot reliably determine what compliance requires.”

The Feed Is the Speech

The Supreme Court has already made clear that curating and presenting speech is itself protected First Amendment activity. Courts should follow the speech-based approach adopted by the federal court in Arkansas, not the conduct-based approach used by the state courts in New Mexico and Los Angeles.

In Moody v. NetChoice, the Court explained that “expressive activity includes presenting a curated compilation of speech originally created by others.” That principle applies directly to products like Instagram Feed. “[D]eciding on the third-party speech that will be included in or excluded from a compilation—and then organizing and presenting the included items—is expressive activity of its own.” The platform’s expressive product stems from Meta’s “choices about whether—and, if so, how—to convey posts.”

That last point matters. The First Amendment protects not only a platform’s decisions about what speech to display, but also how to present it.

As the International Center for Law & Economics (ICLE) argued in its amicus brief before the Massachusetts Supreme Judicial Court in litigation challenging Meta under the Commonwealth’s unfairness authority:

[I]t is clear the First Amendment protects the right of newspapers to choose not only the content it will print, but “the decisions made as to limitations on the size” of the paper. Miami Herald Publishing Co. v. Tornillo, 418 U.S. 241, 258 (1974). Just as a government couldn’t tell a newspaper to expand its size, it couldn’t tell them to use smaller font, or to reduce its margins. In other words, how a newspaper presents its content is as much part of its “editorial control and judgment” as the content itself.

It is much the same here. The Commonwealth alleges that notifications, alerts, infinite scroll, autoplay, and ephemeral content are mere conduct rather than protected editorial choices. But this simply can’t be the case.

That does not mean social-media platforms are immune from regulation. It does mean that lawsuits targeting how platforms organize and present speech—whether styled as negligence claims or consumer-protection “unfairness” actions—must satisfy the more demanding vagueness standards courts apply when speech is at stake.

Anything less would create enormous pressure for platforms to over-censor. If avoiding liability becomes the overriding imperative, companies will inevitably restrict more user speech, suppress more borderline content, and redesign their services to be blander and less engaging in order to avoid allegations of “addictive” features.

That may make platforms less interesting. It would also make online speech less free.

Protecting minors online is a legitimate and important goal. So is giving parents better tools to supervise their children’s online activity. But education, parental controls, and user-empowerment tools are far more likely to survive constitutional scrutiny—and probably more likely to work—than vague liability regimes that effectively ask courts and juries to decide when an app has become “too engaging.”

The Constitution does not require the internet to be pleasant. It does require the government to speak clearly before punishing how platforms present speech.