Markets might be able to price truth. Whether anyone wants to buy it is another question.

In a recent post, we looked at a small cluster of systems that try to use markets to correct misinformation.

Start with a simple analogy: bad information is a kind of pollution, a familiar problem in law & economics. In this case, the pollution manifests as a market failure in journalism and social media. A well-designed “truth-bounty” system could, in theory, reward those who earn public trust and capture attention by being right. That would increase the production and spread of high-quality news, while pushing misinformation to the margins.

Some of these proposals cut out authors and publishers altogether. Prediction markets and “retrodiction markets” would let anyone with a view—and some cash—bet on what’s true. Think the moon landing was faked? Buy “Yes” or “No.” Maybe the 1977 film “Capricorn One” (about a staged Mars landing) reflected conspiracy culture. Or maybe it was, depending on your priors, closer to documentary.

This is not entirely hypothetical. Financial markets already host a version of it. Certain firms do investigative work to uncover fraud and mismanagement in publicly traded companies, then take positions that pay off when the information becomes public. Short-selling ahead of the reveal can be quite profitable. That’s the “Hindenburg model,” named for Hindenburg Research, a prominent practitioner.

All of this sounds elegant in theory. In practice, these incentive-aligned truth machines face serious obstacles—especially when one tries to export them from finance into the messier world of politics and social discourse, where reliable information is in short supply and trust is even scarcer.

Truth Is Not Self-Executing

A core problem with “truth bounties” sits right there in the label. Everyone understands what a bounty is. Truth is another matter. Much of what drives disagreement does not sort neatly into true or false.

Statistics make the point nicely. Lies and damned lies are told with statistics [apologies]. Cancer deaths continue to rise—true—unless one uses age-standardized rates, in which case the claim becomes false. Non-falsifiable statements create even more room for mischief. “A lot of people are saying this is the best blog post ever” gestures at consensus without committing to anything testable. It’s not true, but somewhere out there may be a sufficiently large—and sufficiently obscure—group to make the statement technically defensible.
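The cancer example is easier to see with numbers. The sketch below uses invented figures (two age bands, two years, and an assumed set of standard-population weights) to show how total deaths can rise while the age-standardized rate falls. None of this is real data, and the function names are ours, chosen only for illustration.

```python
# Hypothetical figures, invented for illustration only.
# Each age band maps to (population, deaths) for that year.
year_a = {"under_65": (900_000, 900), "65_plus": (100_000, 2_000)}
year_b = {"under_65": (850_000, 765), "65_plus": (200_000, 3_600)}

# Assumed weights: the share of each age band in a fixed reference population.
standard_weights = {"under_65": 0.85, "65_plus": 0.15}


def total_deaths(year):
    """Raw death count across all age bands."""
    return sum(deaths for _, deaths in year.values())


def crude_rate(year):
    """Deaths per 100,000 people, ignoring the age mix."""
    population = sum(pop for pop, _ in year.values())
    return total_deaths(year) / population * 100_000


def age_standardized_rate(year, weights):
    """Direct standardization: weight each band's own death rate
    by that band's share of the reference population."""
    return sum(
        weights[band] * deaths / pop * 100_000
        for band, (pop, deaths) in year.items()
    )


for label, year in (("year A", year_a), ("year B", year_b)):
    print(
        f"{label}: deaths={total_deaths(year)}, "
        f"crude={crude_rate(year):.1f}, "
        f"standardized={age_standardized_rate(year, standard_weights):.1f}"
    )
```

In this toy population, deaths climb from 2,900 to 4,365 because the older group doubles in size, yet the death rate within each age band falls. "Deaths are rising" and "the rate is falling" are both defensible, which is exactly the problem for anyone asked to resolve a bet on the claim.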

Our own research on the quality of news and commentary drives this home. We look at logical argumentation, evidentiary sufficiency, predictive accuracy, and similar markers. Much of what passes for analysis, though, is emotive content built on in-group premises. “Democracy is doomed” may resonate strongly within a shared ideological community. Analytically, it says very little.

At that point, truth and falsity never really make it onto the workbench.

Truth Markets Need More Than Theory

Any truth-bounty system will need a standard for resolving disputes. Odds are, it will look something like the “materially misleading” standard from fraud and consumer-protection law. That works fine in court. It is a heavier lift for the general public. Asking ordinary participants to apply lawyerly standards—especially in a high-conflict environment—is optimistic. Many will see a truth-bounty regime not as neutral adjudication, but as another elite project to define and enforce “fake news.”

Then comes the question of who decides. Every system needs an arbiter. Retired judges would bring credibility, but not cheaply. Subject-matter experts bring knowledge, but also baggage. Fair or not, many people already distrust them as part of the system. In a polarized setting, participants may accept dubious claims about arbiters as readily as they accept conspiracy theories about pizza parlors in Washington, D.C. And once arbiters matter, they become targets of pressure, persuasion, or worse.

Some models try to sidestep the problem by letting the market decide. In retrodiction and securities markets, participants rely on price signals. The price of a contract reflects a rough consensus about the underlying question, whether that is a contested factual claim or a company’s prospects.

That works better in finance than elsewhere. Outside financial markets, trading can be thin. Thin markets are easier to manipulate and harder to trust. There are potential fixes, but they introduce their own tensions. It would be more than a little ironic to import insider-trading rules into truth markets when the most valuable traders—the ones with the best information—are, by definition, insiders.

Thin markets also blunt incentives. Low expected returns will not draw out high-value information. As the prior post put it, “A prediction market that allows the evidence-holder to profit from the information will draw more information out faster.” True enough—but only if the rewards are meaningful. A congressional staffer who can expose her boss’ bribe-taking is not going to do it for $10,000 if it costs her job.
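The staffer's arithmetic can be sketched in a few lines. We assume a binary contract that pays $1 if the claim resolves true, and we treat market depth as a simple cap on how many contracts can be bought near the current price. That is a simplification, since real purchases move the price. Only the $10,000 figure comes from the example above; the price, depth, and personal-cost numbers are hypothetical.

```python
def max_profit(price, max_shares):
    """Profit from buying `max_shares` contracts at `price`,
    each paying $1 if the claim resolves true."""
    return max_shares * (1.0 - price)


def net_payoff(price, max_shares, personal_cost):
    """Trading profit minus what disclosure costs the informant."""
    return max_profit(price, max_shares) - personal_cost


def break_even_shares(price, personal_cost):
    """Market depth needed before disclosure pays for itself."""
    return personal_cost / (1.0 - price)


# Hypothetical inputs: contract trades at 20 cents; losing the job
# is assumed to cost the staffer $150,000.
price = 0.20
personal_cost = 150_000

thin = net_payoff(price, max_shares=12_500, personal_cost=personal_cost)
deep = net_payoff(price, max_shares=500_000, personal_cost=personal_cost)

print(f"thin market net payoff: {thin:,.0f}")
print(f"deep market net payoff: {deep:,.0f}")
print(f"break-even depth: {break_even_shares(price, personal_cost):,.0f} contracts")
```

Under these assumptions, the thin market caps profit at roughly $10,000 and leaves the staffer deep in the red, while the deep market more than covers her loss. The same information is worth disclosing in one market and not in the other.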

It is tempting to treat market-based solutions to misinformation as elegant, engineerable systems. In reality, they have to operate in a social environment that resists clean design. Truth markets would enter a society that is, in many ways, comfortable with the status quo. Not everyone is eager for a new mechanism to surface uncomfortable facts. Some will see innovation. Others will see risk, moral decay, or something worse.

When Markets Incentivize Mayhem

One standard criticism of prediction markets is that they do not just incentivize good behavior—like exposing corruption—but can also incentivize very bad behavior.

The classic example is the “assassination market.” The idea is straightforward and unsettling: someone could profit from correctly predicting a political leader’s death and, in the extreme case, could bring that outcome about. To get ahead of this, prediction market Kalshi has adopted a policy of refusing to pay out on predictions made correct by a death. That stance drew attention from legislators and litigation from users when Kalshi declined to pay out on a contract asking whether Ayatollah Ali Khamenei would be out as Iran’s supreme leader before March 1, 2026. He was out, all right. In a body bag.

That policy addresses the most obvious edge case. It does not eliminate the broader moral overhang. These market-oriented truth mechanisms sit at an uneasy intersection of investing, journalism, and gambling. If they start to look like the sportsbook at the Circa Resort & Casino in Las Vegas, many people will not treat them as a serious response to misinformation. Add in the risk of a new class of “problem gamblers,” and public acceptance becomes even more fragile.

The law already draws a line here. “Games of skill” get more leeway, in part because they can generate social value—say, when better-informed traders improve capital allocation. “Games of chance” fare worse, at least outside their entertainment value, especially under more restrictive regulatory frameworks.

Regulators are beginning to test those boundaries. Some jurisdictions have moved to limit or prohibit wagers on granular events, such as “which team will score the next field goal.” Arizona has sued Kalshi, alleging illegal gambling and election wagering. But prohibition tends to displace, not eliminate, activity. With multiple jurisdictions and a largely borderless internet, prediction markets will migrate. Some will include unsavory topics. Others will happily take bets on the next basket in tonight’s game.

That reality points in a different direction. It is better to have most prediction-market activity in stable, lawful jurisdictions such as the United States than to push it offshore. Keeping markets domestic reduces the risk of highly liquid activity in less-regulated venues and makes oversight more feasible. Participants who place large, well-timed bets—say, on an assassination or other harmful event shortly before it occurs—often reveal themselves through their trading patterns. Investors piling into a stock just before a merger announcement tend to do the same.
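What "revealing themselves through trading patterns" might look like can be sketched with a simple screen: flag any bet that is both far larger than the typical stake and placed shortly before the event. This is a toy heuristic of our own, not a description of how any exchange or regulator actually conducts surveillance. The `Trade` record, the 24-hour window, and the 10x size threshold are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Trade:
    trader: str
    stake: float          # dollars wagered
    placed_at: datetime


def flag_suspicious(trades, event_time,
                    window=timedelta(hours=24), size_multiple=10.0):
    """Return trades that are unusually large AND placed within
    `window` before the event. Expects a non-empty list of trades."""
    typical = median(t.stake for t in trades)
    return [
        t for t in trades
        if timedelta(0) <= event_time - t.placed_at <= window
        and t.stake >= size_multiple * typical
    ]


# Hypothetical trade log for a single contract.
event_time = datetime(2030, 1, 10, 12, 0)
trades = [
    Trade("a", 50, datetime(2030, 1, 2, 9, 0)),
    Trade("b", 80, datetime(2030, 1, 5, 14, 0)),
    Trade("c", 60, datetime(2030, 1, 9, 18, 0)),     # late but small
    Trade("d", 5_000, datetime(2030, 1, 10, 9, 0)),  # late and large
    Trade("e", 4_000, datetime(2030, 1, 3, 11, 0)),  # large but early
]

flagged = flag_suspicious(trades, event_time)
print([t.trader for t in flagged])
```

Only trader "d" trips both conditions here. A screen like this is easy to run when the venue is domestic and its records are within reach of a subpoena, and much harder when the order book sits offshore.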

You Can’t Bounty the News Cycle

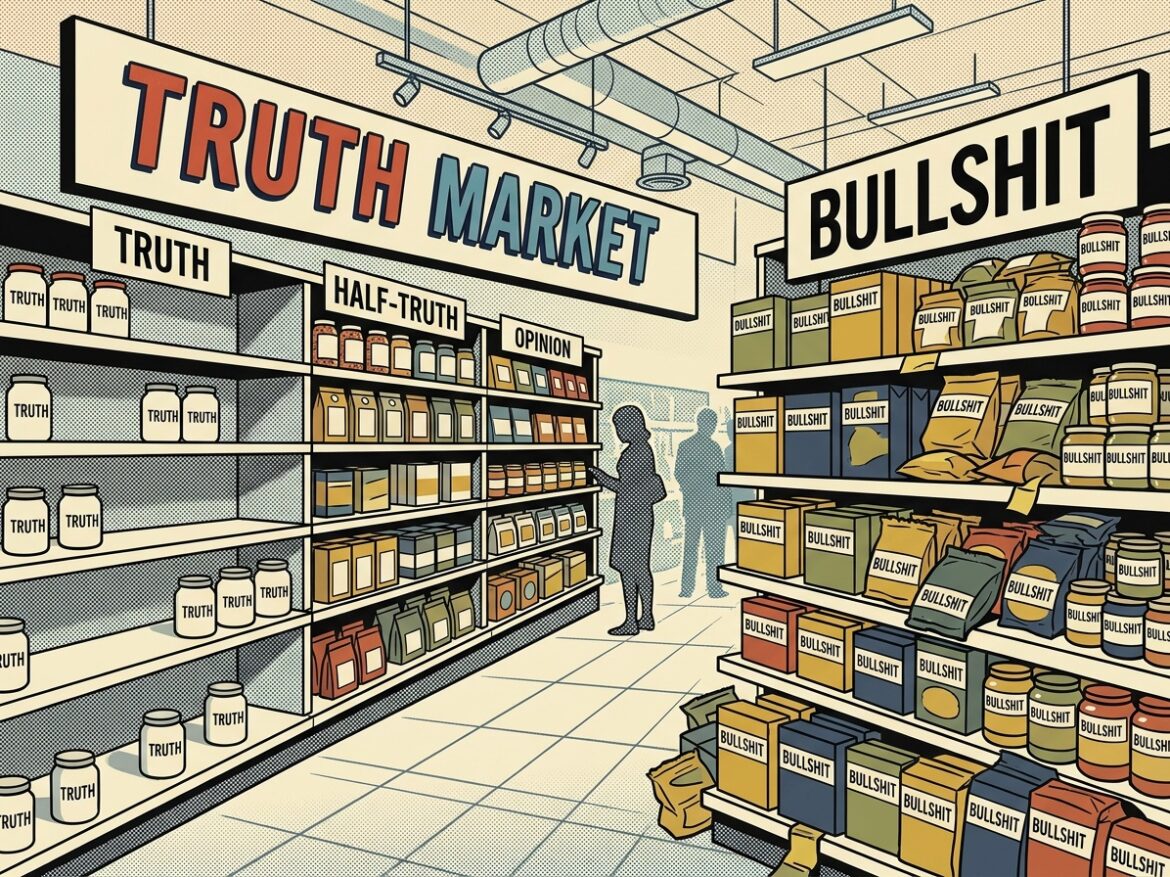

There are other social frictions. The “truth-bounty” concept treats news stories like discrete products—small, shelf-stable units, like jars of pickles, olives, or peppers.

Journalists do not see their work that way. They provide a service. A subscription buys more than the news of the day. It buys travel coverage, crossword puzzles, and weather reports. More importantly, it funds ongoing work—someone digging into corruption at the statehouse and in the boardroom, someone reporting on the lives of women in sub-Saharan Africa, someone tracking the environmental toll on Everest. Journalism is a public service, not a jar of pickles.

Even at the story level, the model does not quite fit. A news article—the “first draft of history”—is rarely final. It evolves hour by hour, day by day. Which version earns the bounty? The first? The corrected? The updated?

Do not expect journalists to rush toward truth bounties or other market-based mechanisms. The industry already faces pressure from failing subscription and advertising models. Newsrooms are shrinking. Revenues are thin. And now the proposal is to layer on additional risk—chasing bounties in a system they do not control. That is not an easy sell.

Truth Has a Demand Problem

The biggest drag on market-based solutions to misinformation may not be the mechanisms. It may be the consumers.

Much of the literature assumes that people want the truth when they consume news and information. That assumption does a lot of work. It is also, at best, incomplete.

Americans love bullshit. It is easy to blame media outlets—new entrants that ignore journalistic standards, or social media platforms that amplify clickbait and outrage. But demand matters at least as much as supply. Bullshit persists because people want it. It reinforces prior beliefs. It flatters the home team. It offers a way to snub authority.

In practice, many consumers prioritize social belonging, stability, and intellectual ease over truth-seeking.

That complicates the case for market-oriented fixes. These proposals often assume latent demand for truth just waiting to be unlocked. The reality is more nuanced—and more uncomfortable. People are not just consumers of information; they are social actors with preferences that do not map cleanly onto accuracy.

That leaves a set of open questions. Can social incentives shift behavior toward better thinking? Can truth be made “cool”? If a committed group of truth-seekers emerges, will others follow? Is “putting your money where your mouth is” enough to generate real demand for accuracy?

If you think you know the answers, there is a market for that. Place your bets.