The European Commission’s first review of the Digital Markets Act (DMA) lands at an awkward moment. Just as Brussels begins to test whether its flagship digital regulation works, AI threatens to change the game the DMA was built to police. The question is not just whether the DMA can handle AI. It is whether lawmakers built it for the right market at all.

As AI spreads, it has sharpened an old question in a new setting: How should competition policy preserve room for innovation while also addressing the anticompetitive risks new technologies can create? The pace of AI development raises an even more pointed concern. Can recently adopted ex ante rules—rules meant to prevent competitive harm before it occurs—remain “future-proof” in digital markets? The DMA did not anticipate AI’s rapid rise. It may age faster than expected.

That concern looks more urgent with the emergence of assistive and agentic AI. These tools could reshape core digital intermediation functions, including web browsing, online search, and e-commerce. Put less grandly: They may change how users find, choose, and buy things online. AI assistants and agents increasingly act as standalone interfaces, allowing users to access third-party goods, services, and content without leaving a conversational environment. That shift could reconfigure where—and how—competitive power concentrates.

This backdrop highlights a familiar tension. Competition law moves slowly, but it adapts. Its open-ended standards can evolve with changing market realities. Sector-specific regulation works differently. It can target problems quickly and directly, but it often locks in assumptions about how markets operate. When technology shifts, those assumptions can break.

The DMA illustrates the tradeoff. Despite its recent adoption, the regime already risks rapid aging. Lawmakers designed it for a digital ecosystem that AI-driven intermediation may soon transform.

Against that backdrop, the European Commission asked stakeholders whether the DMA can address AI-powered services as they roll out. It also considered whether to revise the list of core platform services—the categories of digital services covered by the DMA—and the obligations attached to them.

New AI entrants sit at the center of that inquiry. When existing gatekeepers integrate AI into their ecosystems, the DMA may capture those services. But the regulation does not clearly reach new, standalone AI operators.

The Commission’s review report tempers expectations. So far, the Commission has taken a cautious line. It views the DMA as fit for purpose and does not propose amendments. In the Commission’s view, the regulation has proved adaptable enough to keep pace with developments like AI. Even so, its analysis focuses mainly on how existing gatekeepers deploy AI within designated core platform services.

That leaves a mixed picture. The Commission is at once right and wrong.

Where the Commission Gets It Mostly Right

AI services may fall within the DMA through two main paths. First, an AI provider might itself offer a core platform service and meet the thresholds for gatekeeper designation. Second, an existing gatekeeper might embed AI features into an already designated core platform service, bringing those features within the DMA’s obligations.

On the second path, the Commission is largely right. This scenario does not pose especially novel problems for applying the DMA, as written, to AI-related markets. The familiar competition concerns remain. Gatekeepers benefit from structural advantages, including privileged access to cloud infrastructure and data. They can also integrate AI assistants and agents directly into their core platform services. In principle, the regulation’s existing provisions can address those risks.

The Commission’s recent specification proceeding against Google illustrates the point. The Commission seeks to ensure that Google gives third-party AI-service providers access to the same Android operating-system features and functionalities available to its own services. It also aims to require Google to grant third-party online search-engine operators—including AI chatbot providers that offer search functionalities—access to anonymized ranking, query, click, and view data on fair, reasonable, and non-discriminatory (FRAND) terms. At the same time, the Commission is examining whether Google’s integration of AI Overviews into Google Search complies with the DMA, particularly the ban on self-preferencing in Article 6(5).

The harder question concerns new AI entrants. The DMA may not reach them at all. Standalone AI applications do not fit neatly within any of the core platform service categories listed in the regulation. Unless those services can be folded into existing categories—such as online search engines, web browsers, or virtual assistants—providers cannot be designated as gatekeepers based solely on their AI offerings. The result may be a split regulatory landscape, where large incumbent platforms and new AI entrants face different rules.

That gap matters most for AI assistants and agents. These systems could reshape competitive dynamics and market structures in fundamental ways. They may become new gateways—ones the DMA did not anticipate—and place AI providers in a role functionally similar to gatekeepers.

When the Model Doesn’t Fit the Models

As we argue in a forthcoming paper, agentic AI should prompt a rethink of the DMA’s overall architecture.

The regulation reflects a different technological and economic moment. Lawmakers designed it around the structural features of earlier digital markets—features that helped identify both gatekeepers and core platform services. In that sense, the DMA embodies a competition-policy framework rooted in a Big Tech-centered view of digital competition. But AI may not follow the same path. Foundation models—the large AI systems that power many downstream applications—differ in important ways from the platforms that shaped that earlier paradigm.

Some familiar concerns remain. Access to key upstream inputs—computing power, data, and highly skilled labor—still matters. So do the incentives of large incumbent digital firms to fold AI into their existing products and services. Those dynamics can reinforce existing advantages.

But the downstream picture looks different. Competition in AI markets appears active and crowded. New entrants continue to emerge. Many have achieved commercial success and attracted significant investment. Barriers to entry, at least for developers, may be lower than initially feared. And the range of downstream applications suggests that foundation models will not produce uniform winner-takes-all outcomes across sectors.

The DMA, however, targets a specific problem: the gatekeeping power of vertically integrated firms that operate in a dual role. These firms control access to an important platform while also competing on that platform. Much of the DMA focuses on limiting leveraging strategies, especially self-preferencing. That logic fits traditional digital platforms. It fits less comfortably with the structure and behavior of many new AI entrants.

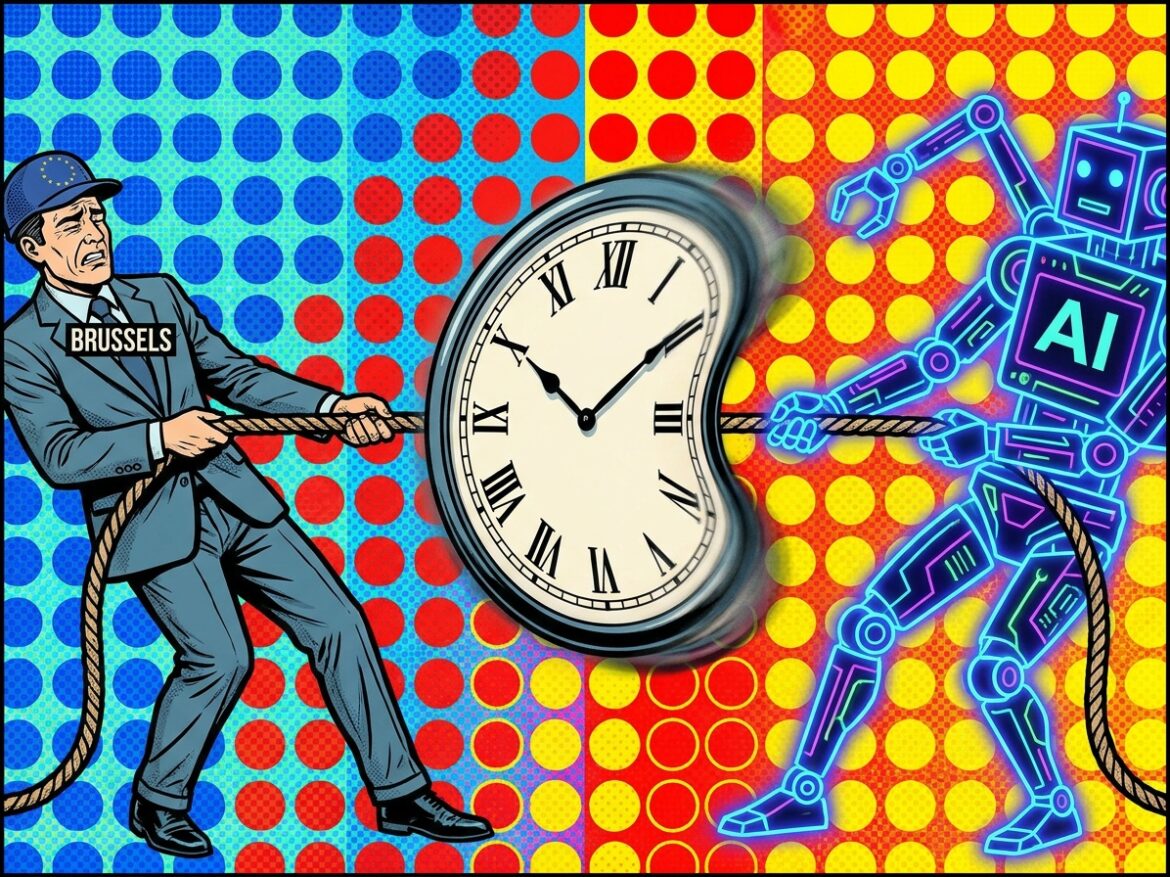

The Clock Keeps Ticking as Brussels Buys Time

In principle, the logic behind the DMA supports extending it to new AI players. The regulation rests on a simple premise: Traditional antitrust enforcement often arrives too late in digital markets, after positions have hardened and become difficult to dislodge. That concern could apply with equal force to AI, given its potential to produce new gatekeepers. Unless European policymakers intend to abandon the strategy that has defined the Brussels approach to digital markets, they will need to rely on the DMA as the primary framework governing competition in the AI era.

But that instinct runs into a real risk: acting too soon. The AI sector remains in flux. Technologies, business models, and market structures have not yet settled. Extending ex ante regulation to foundation models and AI services at this stage could constrain innovation by limiting developers’ ability to experiment with new features, design choices, and platform architectures.

It would also force policymakers to predict not just the pace, but the direction of technological change in fast-moving and uncertain markets. That is a tall order. Misjudging where market power will emerge—or how it will operate—could carry real costs for innovation, competition, and consumer welfare.

The harder question, then, is one of timing. Intervene too late, and the DMA risks irrelevance. Intervene too early, and it risks distortion.

That is what makes the first review of the DMA more than a routine stocktaking exercise. It surfaces a deeper dilemma for a regime that is, in many respects, still young. The issue is not just whether specific provisions work as intended. It is whether AI forces policymakers to reopen the legislative framework itself.

For now, the Commission has chosen to buy time. It is sticking with its Big Tech-centered approach and continuing to monitor how AI develops. That may be prudent in the short term.

But the underlying dilemma will not wait.