The push to restrict teens’ access to social media is accelerating worldwide, even as the underlying evidence remains uncertain.

In recent years, a growing number of governments have proposed age-based restrictions, mandatory parental-consent requirements, or outright bans on minors’ use of social media platforms. Recent global tracking indicates that at least 42 countries are considering, proposing, or implementing some form of social media age restriction, and several have already enacted or adopted legislation limiting minors’ access to online platforms. In the United States, two jury trials this week found Meta and Google liable for harms to children tied to product design, including claims that the companies failed to adequately verify users’ ages before allowing them to create profiles.

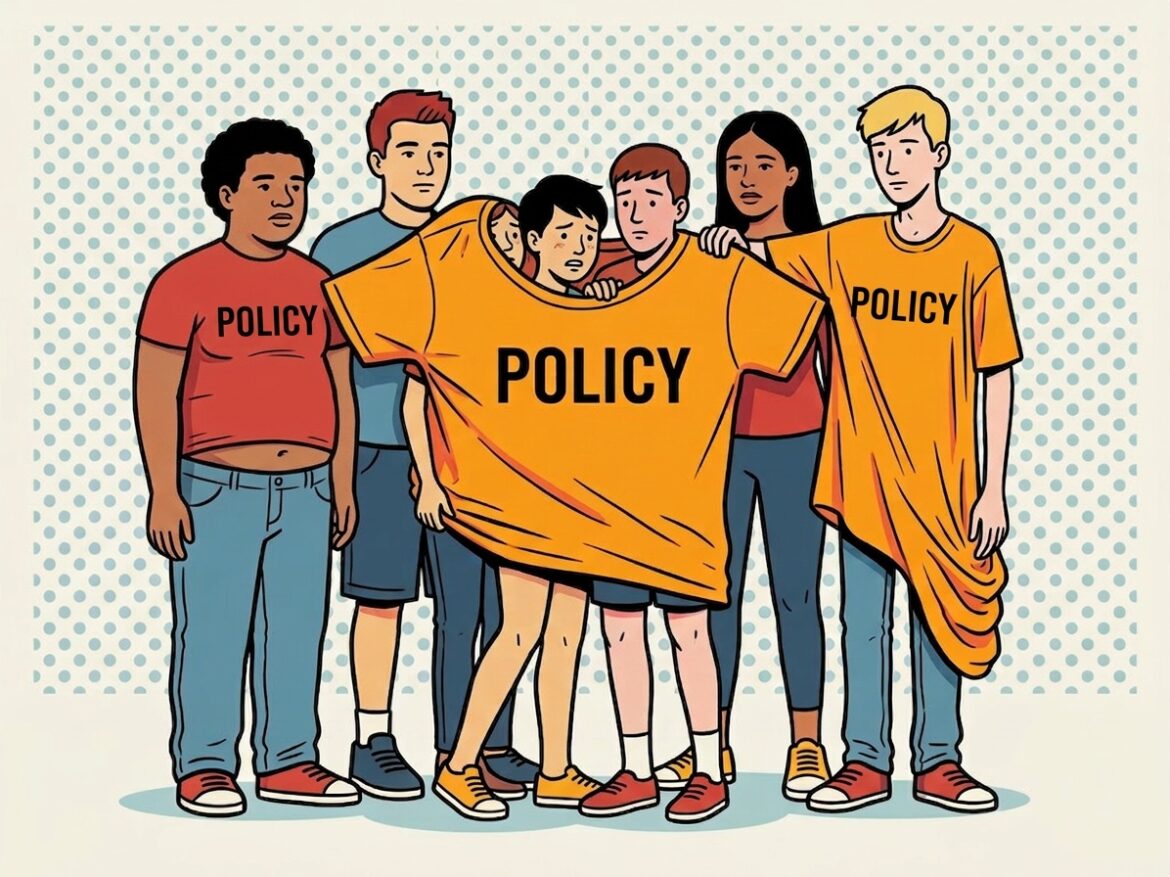

This surge in regulatory activity reflects a broader policy narrative that social media drives rising rates of adolescent anxiety and depression. As concerns about youth mental health and online safety intensify, policymakers have turned to blunt regulatory tools, including blanket bans and parental-consent regimes with strict age-verification requirements, to address perceived harms.

Despite strong political momentum, the empirical basis for broad restrictions remains contested: the causal relationship between social media use and teen mental health is still uncertain. Meanwhile, the rapid expansion of regulatory initiatives across jurisdictions risks creating a fragmented global policy landscape.

Anxiety, Apps, and Assumptions

Many proposals to restrict teens’ access to social media rest on the assumption that these platforms drive rising anxiety and other mental-health problems among adolescents. Media coverage often reinforces this narrative. For example, reports on proposed restrictions in Europe claim that “hours of scrolling over harmful content is rewiring young brains and causing anxiety and other health hazards, experts say, compelling European governments to act.”

The empirical evidence for a strong causal link, however, remains unsettled. Existing research largely identifies correlations between social media use and negative outcomes rather than causal effects.

Adolescent mental health reflects a wide range of influences, including family environments, school-related pressure, economic conditions, and broader social changes. This complexity makes it difficult to isolate the specific effects of social media. The National Academies of Sciences, Engineering, and Medicine’s “Social Media and Adolescent Health” report concluded that “[t]he committee’s review of the literature did not support the conclusion that social media causes changes in adolescent health at the population level.”

Emerging research further suggests a more nuanced relationship between social media use and well-being. Some researchers find that not using social media at all may also correlate with negative outcomes.

Not Just Doomscrolling

Public debate about social media and youth mental health focuses almost exclusively on potential harms. This framing overlooks the benefits these platforms provide to many adolescents. For teenagers, social media often extends their existing social lives, allowing them to stay connected with friends, share experiences, and participate in communities beyond their immediate offline environments.

Online platforms can also create connections that may not exist locally. Teenagers who feel isolated at school or in their communities may find peers online who share similar interests, identities, or experiences. In this way, social media can facilitate forms of social support that would otherwise be difficult to access. As the U.S. Surgeon General noted in a 2023 advisory, social media can enable community building and connections among peers with shared identities and interests.

Many adolescents report that these platforms help them feel more accepted, more supported during difficult periods, and more connected to friends.

These benefits matter for policy. If social media generates both costs and benefits, policies that treat these platforms as inherently harmful risk ignoring their positive role in adolescents’ social lives. Proposals that focus only on harms present a one-sided approach to a more complex issue.

When ‘Kids Today’ Isn’t a Category

Research consistently finds that the effects of online platforms depend heavily on individual characteristics and context. As the American Psychological Association explains, “in most cases, the effects of social media are dependent on adolescents’ own personal and psychological characteristics and social circumstances,” as well as the specific content and features of the platforms they use.

This variation produces very different outcomes across users. Some teenagers experience harm from excessive or unhealthy use, while others benefit from social connection, access to information, or community support. Outcomes often turn on factors such as age, personality, family environment, and patterns of platform use.

The Organisation for Economic Co-operation and Development (OECD) similarly observes that vulnerability to problematic digital-media use often stems from factors outside the digital environment, noting that “various personal and environmental factors in the non-digital world can make children more vulnerable to problematic digital media use.”

Blanket bans assume that all teenagers face similar risks and should be treated alike. The evidence suggests otherwise. Research on digital literacy shows that young people vary significantly in their ability to navigate online environments. One analysis finds that children with stronger digital skills are better equipped to manage online risks, while those with limited digital literacy face greater vulnerability.

These differences complicate the case for broad prohibitions. When outcomes vary widely, treating all young users as a single group risks restricting beneficial uses for those who navigate platforms responsibly while targeting harms experienced by a smaller subset. This heterogeneity points toward a more nuanced policy approach—particularly when considering who is best positioned to manage these risks.

Regulators Aren’t the Least-Cost Avoiders

From a law & economics perspective, variation in risk across individuals shifts the focus to identifying the “least-cost avoiders”—the parties best positioned to prevent harm at the lowest cost. In this context, teenagers—and the actors closest to their daily lives, including parents, families, schools, and community institutions—are often better positioned than distant regulators to manage risks associated with social media use.

These actors possess far more information about teenagers’ behavior, maturity, and social environments than regulators designing uniform rules. Parents can set screen-time limits, supervise platform use, and restrict certain applications based on individual circumstances. Schools and local communities can also shape norms around responsible online behavior. This informational advantage and proximity allow these institutions to identify problems early and respond in ways tailored to individual needs.

Policy debates often overlook these community-level institutions. As Mario Zúñiga writes:

[T]he debate over social media harms is a case in point. Faced with new technologies that challenge traditional parenting, many have leapt to call for state intervention (bans, mandates), while neglecting the role of families, schools, and social norms, the community institutions that have historically guided youth behavior.

Courts have also recognized the importance of parental authority in this area. In NetChoice v. Griffin, the court noted that parents can regulate their children’s social media use by limiting time on platforms, restricting accessible content, and supervising online interactions. In practice, a range of tools already allows parents to monitor and manage their children’s digital activity. Social media services themselves provide parental-control tools that enable families to supervise platform use. Against this backdrop, age-verification and parental-consent requirements are, as U.S. District Court Judge Timothy L. Brooks put it, “more restrictive than policies enabling or encouraging users (or their parents) to control their own access to information, whether through user-installed devices and filters or affirmative requests to third-party companies.”

What Happens After the Ban

Even when well-intentioned, blanket bans on teenagers’ use of social media may produce unintended consequences. One concern is displacement. Restrictions may push young users toward less regulated or harder-to-monitor online spaces. Teenagers may circumvent age restrictions or migrate to alternative platforms that operate outside mainstream services. As one analysis notes, “under-16s will simply switch to other platforms that are less studied and less well-regulated.”

If activity shifts to encrypted or underground platforms, parents and educators may lose visibility into teenagers’ online behavior, making supervision more difficult. Broad prohibitions can therefore end up “making it harder for parents and educators to identify and address issues.” These measures may also alienate young users, who could view them as intrusive and dismissive of their autonomy.

Social media bans also raise concerns about access to information and participation in public discourse. For many teenagers, these platforms serve as key channels for communication, news consumption, and engagement with social and educational communities. Restricting access may limit their ability to participate in discussions that increasingly take place online.

In jurisdictions with strong free-speech protections, such as the United States, broad limits on access to communication platforms may also raise constitutional questions. Several federal courts have already enjoined enforcement of state parental-consent and age-verification requirements on First Amendment grounds. As U.S. Supreme Court Justice Brett Kavanaugh explained, “[g]iven those precedents, it is no surprise that the District Court in this case enjoined enforcement… and that seven other Federal District Courts have likewise enjoined enforcement of similar state laws.”

These proposals also present important privacy tradeoffs. Many social media bans rely on strict age-verification systems that require users to submit personal identification or other sensitive information to prove their age.

While platforms already use various methods to estimate or verify age, additional regulatory mandates may expand the collection and storage of personal data. These requirements risk creating new privacy and data-security concerns, particularly when large volumes of sensitive information are handled by private platforms or third-party verification services.

Regulation Without Borders

Governments worldwide are experimenting with policies aimed at protecting minors online, but these approaches vary significantly across jurisdictions. In the European Union, platforms operate under the Digital Services Act, which imposes obligations related to transparency, risk assessment, and protections for minors. In the United States, policymakers have taken a different approach, focusing on proposals such as the Kids Online Safety Act and a range of state-level initiatives addressing youth access to social media. Other countries are considering or implementing age-based restrictions, parental-consent requirements, or broader platform obligations.

These diverging approaches create challenges for platforms operating globally. Companies must navigate a growing patchwork of national and regional rules that differ in scope and design. Requirements for age verification, parental consent, platform design, and access restrictions may vary by jurisdiction. This fragmentation increases compliance complexity and may produce inconsistent rules governing who can access online services and under what conditions.

In response, platforms may apply the most restrictive standard across multiple markets to simplify compliance. This approach can extend stricter rules beyond the jurisdictions that enacted them. As a result, minors may face access restrictions, including removal from platforms, even in places that have not adopted such bans. In this way, blanket prohibitions in one jurisdiction can generate spillover effects that reach well beyond its borders.

A Blunt Tool for a Complex Problem

Proposals to ban teenagers from social media rest on a simplified narrative that online platforms are the primary driver of declining youth mental health. The empirical evidence does not support such a clear causal claim. Instead, the research points to a far more complex relationship, with outcomes that vary significantly across individuals and contexts.

Because social media generates both costs and benefits, blanket bans are a blunt tool. Treating teenagers as uniformly vulnerable ignores differences in digital literacy, family environments, and patterns of platform use. Such restrictions risk eliminating beneficial uses of online platforms while targeting harms that affect only a subset of users.

These policies also carry unintended consequences. Strict age-verification requirements raise privacy concerns. Access restrictions may push users toward less regulated online spaces that are harder for parents and educators to monitor.

If the goal is to mitigate harm, policymakers should be cautious about one-size-fits-all restrictions. Teen mental health reflects a wide range of influences that broad prohibitions cannot effectively address. A more effective approach would preserve the benefits of online participation while targeting specific harms as they arise.

Regulating social media as if all teens face the same risks may feel decisive—but it is neither precise nor effective.