The canonical story of the modern tech firm still starts in a metaphorical garage. William Hewlett and David Packard with the audio oscillator. Steve Jobs and Steve Wozniak with the Apple I. Jeff Bezos with a door-desk and a handful of mail-order books. We like the simplicity—one inventor, one widget, one market. It’s comforting. Sometimes, it’s even useful.

As a description of how value gets created at the technological frontier in 2026, it is badly outdated.

Watch Jensen Huang’s recent conversation with Dwarkesh Patel and the gap becomes obvious. Asked whether NVIDIA risks commoditization, Huang does not just answer the question; he reframes it. The concern is reasonable on its face. NVIDIA “sends a GDS2 file to TSMC,” which fabricates the dies, packages them with high-bandwidth memory (HBM) from SK Hynix and Micron, and hands them off to a Taiwan-based original design manufacturer (ODM). In that sense, NVIDIA “fundamentally makes software that other people manufacture.”

The jargon is worth unpacking because it is the point. A GDS2 file is the final layout file a foundry uses to manufacture a chip. Taiwan Semiconductor Manufacturing Co. (TSMC) fabricates the chip dies. HBM is fast memory built from stacked DRAM dies and placed right beside advanced processors. An ODM designs and builds products that another company sells under its own brand.

In other words, the “product” is not a thing NVIDIA simply makes and ships. It is a coordinated chain of design, fabrication, packaging, memory, systems, software, and deployment.

Huang’s response shifts the lens. NVIDIA’s job, he says, is to turn “electrons to tokens”—to do “as much as necessary, as little as possible.” Whatever the firm does not need to do, it pushes to partners. Whatever it does do, it structures so that everyone else can coordinate around it.

That’s the business.

NVIDIA’s moat is not the graphics processing unit (GPU). It is the coordination layer that makes hundreds of upstream and downstream actors rational in betting on its platform. That list runs long: TSMC, ASML, SK Hynix, Lumentum, Coherent, hyperscalers—the giant cloud providers that buy and operate massive computing infrastructure—artificial-intelligence (AI) labs, framework communities, application developers, financiers, and even, as Huang half-jokes, the plumbers and electricians wiring data-center buildouts.

In short, ecosystem orchestration—not discrete product innovation—is the dominant value-creation pattern in the AI economy.

That reality does not map cleanly onto the categories regulators reach for: single-product monopoly, classic two-sided “matching” platforms, or vertical foreclosure. It also cuts against policymakers’ instincts about where innovation comes from. If the underlying economic phenomenon is ecosystem coordination, analysis that fixates on discrete—and often poorly defined—“products” will miss the mark.

Get the framework wrong, and policy will not just misfire. It will penalize the coordination that drives growth.

The costs do not stay contained. Broad export controls aimed at slowing Chinese competition will also hit U.S. firms and erode the durability of American technological leadership. That tradeoff remains underweighted in a policy debate still anchored to a chip-centric view of the industry.

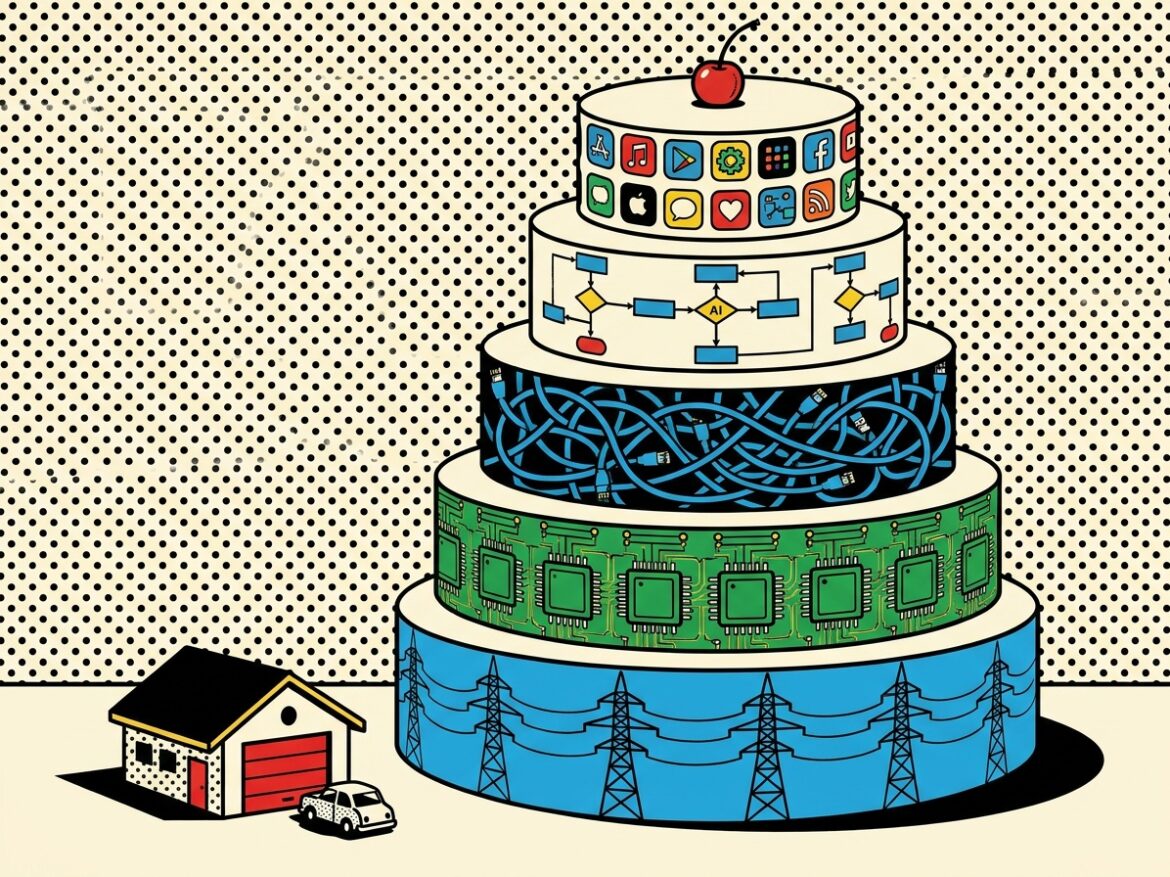

The Five-Layer Cake and the Real Business Beneath It

Huang’s mental model is that AI is “a five-layer cake.” Energy sits at the bottom—along with capital and other supply-chain inputs—followed by chips, systems and networking, the model layer, and, at the top, applications. NVIDIA’s job is not to own each layer. It is to make them mutually rational.

That framing matters for competition policy, regulation, trade policy, and national-security policy in chips and AI. Huang’s interview surfaces four mechanisms that make the layers mutually rational.

Start with upstream–downstream coordination as market-making. Suppliers—Micron on memory, TSMC on logic and packaging, and the silicon-photonics ecosystem on networking—invest at scale because Huang has aligned them around a roadmap they trust. Downstream demand from hyperscalers and AI-first firms makes those forecasts credible.

NVIDIA’s GPU Technology Conference (GTC), on this view, is not just a conference. It is a full-scope market-making event—“the entire universe of AI all in one place”—where “the downstream could see the upstream, the upstream could see the downstream.” The coordination function is the product.

Next is installed-base gravity. Even hyperscalers that can write their own kernels—the small pieces of code that tell processors how to perform specific computations—still want their software to run across the largest possible installed base: clouds, on-premises systems, robots, old GPUs, and new GPUs alike.

Compute Unified Device Architecture (CUDA), NVIDIA’s proprietary software platform and programming model, derives its value not just from kernel performance, but from portability across hundreds of millions of GPUs in varied environments. That is a standard platform network-effect story: the platform becomes more valuable as more users, developers, and tools build around it. The relevant question is not “whose chip is faster?” It is “whose installed base is bigger?”

A third mechanism: complementors as demand multipliers. Complementors are firms or developers whose products make another platform more useful. Much of the AI debate assumes “AI eats software”: agents replace tools, and the industry compresses. Huang argues the opposite. Agents will use Excel, compilers, and chip-design tools from Synopsys and Cadence. That means more instances of those tools, not fewer.

Today, the number of users of Synopsys’ Design Compiler is capped by the number of human engineers. If agents can drive those tools, that cap rises by orders of magnitude. AI does not displace complementors; it multiplies them. The ecosystem expands.

The same logic extends to labor markets. When AI complements tools rather than replaces them, demand rises for both the tools and the workers who use them. That aligns with early empirical evidence on AI’s labor effects and cuts against the zero-sum displacement story (see here and here).

Finally, roadmap credibility becomes part of the product. Blackwell, Rubin, Rubin Ultra, and Feynman—NVIDIA’s successive GPU architectures and system platforms, announced on a predictable cadence to signal future performance and capability—anchor expectations across the ecosystem. Customers planning $100 billion AI factories need a supplier they can plan around. Predictability is a feature. “I can completely depend on them,” Huang says of TSMC. A credible roadmap makes upstream and downstream coordination self-reinforcing.

Put it together and NVIDIA’s market power, if that is the right term, is not “we make the best GPU.” It is “we make it rational for adjacent actors to coordinate around us.” That is a stronger—and more demanding—claim than CUDA lock-in or GPU path dependence.

In that sense, NVIDIA looks less like a traditional manufacturer and more like an ecosystem orchestrator. It resembles what Michael Cusumano, Annabelle Gawer, and David Yoffie describe as “platform leadership,” and what Marco Iansiti and Roy Levien call a “keystone.” It also tracks Alden Abbott’s observation that “firms that look like rivals in one dimension may act as complementors in another, and a static antitrust lens can easily misread value-creating coordination as competitive harm.”

This framing carries several implications.

First, a two-sided-market analysis fixated on price differences across sides will miss the action. The relevant unit is the coordination surface connecting many specialized actors. Conduct that looks like self-preferencing or vertical foreclosure in a single-product frame often functions, in an ecosystem frame, as the set of hooks that makes complementor investment rational. Blanket bans on self-preferencing risk depressing integration and chilling that investment.

Second, the depth of the moat depends as much on complementor switching costs as on user switching costs, and both may be lower than they first appear.

More broadly, the regulatory, trade, industrial-policy, and national-security stakes do not reduce to any single layer of the stack. If the relevant economic unit is the ecosystem, policy that targets component shares, static market structure, or bilateral firm conduct risks aiming at the wrong object.

Antitrust can mistake coordination for exclusion. Trade policy can weaken the installed base, developer mindshare, and complementor density that sustain U.S. advantage. Industrial policy can overfocus on fabs—semiconductor fabrication plants—while underinvesting in standards, software, and downstream adoption. National-security policy can restrict hardware flows while conceding the broader ecosystem through which technological leadership reproduces itself.

The throughline is simple: Winning the chip layer is not the same as winning the system, and policy that controls the chip layer does not thereby control the system.

Models Compete, Ecosystems Compound

The pattern shows up clearly in a current example: Anthropic’s strategy around Claude is, at bottom, an ecosystem play.

Claude Code turns the model into a substrate that third parties extend through hooks, Model Context Protocol (MCP) servers, slash commands, and plugins. Cowork applies the same logic to non-developer knowledge work, offering a desktop layer where third-party plugins, skills, and connectors compose Claude into task-specific workflows—legal review, marketing analytics, financial modeling, and more.

MCP, an open protocol for connecting AI models to external tools and data sources, works as a shared application programming interface (API) standard that lets this ecosystem scale without Anthropic building every integration itself.
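Part of what makes a protocol like MCP an ecosystem multiplier is that every integration speaks the same message format. As we read the published specification, MCP messages are JSON-RPC 2.0 requests: a host discovers a server’s tools with `tools/list` and invokes one with `tools/call`. The sketch below only builds those request payloads. The tool name and arguments are hypothetical, transport and session setup are omitted, and the specification itself remains the authority on current details.

```python
import json
from typing import Any


def tool_list_request(request_id: int) -> str:
    """JSON-RPC 2.0 request asking an MCP server which tools it exposes."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"})


def tool_call_request(request_id: int, tool_name: str, arguments: dict[str, Any]) -> str:
    """JSON-RPC 2.0 request asking an MCP server to run one named tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


if __name__ == "__main__":
    # Hypothetical third-party tool, e.g. one a legal-review plugin might expose.
    print(tool_list_request(1))
    print(tool_call_request(2, "find_indemnity_clauses", {"document_id": "contract-123"}))
```

Nothing in these payloads is specific to one vendor’s integration: any complementor that implements the same methods can, in principle, plug into any compliant host. That is the sense in which a shared standard lets integrations multiply without the platform owner building each one.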

The economics are straightforward. Each plugin, skill, and connector represents a specialized investment by an outside firm. Each one makes Claude more valuable for a particular use case. That raises users’ willingness to pay, which drives API usage, which, in turn, justifies more complementor investment.

That feedback loop—not any single feature—is what makes the position durable.

Against Claude’s recent gains, OpenAI is now visibly playing catch-up on this dimension. Not on raw model quality—where leadership shifts quarter to quarter—but on the complementor stack: custom GPTs, an apps platform, Operator, the ChatGPT enterprise tier, and Codex’s developer ergonomics.

Codex targets developers directly. At the same time, its streamlined modes suggest a push toward non-coding use cases—much as Cowork does.

This is exactly what Jensen Huang means when he warns that “ecosystems are hard to replace. It costs an enormous amount of time and energy.” In pure technological terms, OpenAI is not far behind Anthropic. But that is not the margin that decides the outcome.

The edge goes to whoever builds the largest installed base and the deepest set of third-party integrations that make users’ lives easier.

Competition, in other words, does not hinge on the model alone. It plays out across the ecosystem—and it is both intense and multidimensional.

A National Symbol Isn’t an Ecosystem

The clearest live case for the ecosystem framing—and the one with the highest policy stakes—is China’s own DeepSeek.

DeepSeek V4 launched April 23, 2026. In its technical report, the team concedes the model sits “3 to 6 months behind” the closed-source frontier. Outside observers suspect the gap is wider. CEO Liang Wenfeng framed the company’s mission around “hardcore research,” not products. He criticized Chinese tech for “freeriding on Moore’s Law” and positioned DeepSeek as a contributor to foundational innovation rather than an applications company.

It was an earnest—and widely admired—vision.

It was also costly.

DeepSeek lost core talent to Tencent, ByteDance, Xiaomi, and DeepRoute.ai in areas spanning large language models (LLMs), agents, optical character recognition (OCR), and multimodal systems (models that process more than one kind of input, such as text and images). It missed China’s consumer-AI inflection point, as ByteDance’s Doubao became the most-downloaded chatbot, Alibaba’s Afu broke through in health, and firms like MiniMax and Z.ai moved toward public markets.

Operationally, it stumbled. A major training-run failure in mid-2025 hit as the firm migrated from NVIDIA to Huawei Ascend. By April 2026, DeepSeek had opened to external financing, no longer able to self-fund frontier-scale training. A Qwen employee, quoted in 36Kr, captured the shift: “the golden age of nonprofit AI development is over.”

In an ecosystem-driven economy, “failure to develop a vision of commercialization” is another way of saying “failure to build a compelling ecosystem.” Commercialization is not just revenue. It is how a frontier lab secures distribution, capital, complementor relationships, and the feedback loops that fund the next training run—and the next architectural bet.

DeepSeek did not need to become a marketplace. It needed to become an orchestrator: a substrate around which Chinese hyperscalers, application developers, chip vendors (Huawei Ascend, Cambricon, Biren), and end users could coordinate.

That role did not stay vacant. ByteDance (Doubao), Alibaba (Qwen), and, increasingly, closed-source Chinese labs filled it. DeepSeek became a national symbol without an industrial ecosystem.

The episode also underscores a broader point: Multiple ecosystems operate simultaneously at different layers of the stack. NVIDIA is one orchestration layer. So are firms higher up the stack. Each integrates across layers; none operates in isolation.

Huang flags this dynamic in both directions. Forcing U.S. firms out of China, he warns, risks backfiring: “if we are forced to leave China, it would be a policy mistake … it [will] accelerate their chip industry and force all of their AI ecosystem to focus on their internal architectures.”

The ecosystem argument cuts both ways. The failure of a Chinese lab to commercialize creates an opening for U.S.-aligned ecosystems. Policy that closes that window does so at a cost.

Closing the Window on Ourselves

Competition policy has at least started to reckon with ecosystem dynamics. Trade and national-security policymakers need to catch up.

U.S. policy on advanced-AI export controls has, in effect, tried to shut the window through which American ecosystems might win. The logic is intuitive: restrict the flow of frontier accelerators, and of the extreme ultraviolet (EUV) lithography equipment used to produce them, to the People’s Republic of China (PRC); widen the capability gap; and give U.S. labs time to reach dangerous capability thresholds first and harden systems before adversaries catch up.

Run that logic through an ecosystem lens—and against the empirical record Huang describes—and two problems emerge. Neither is the one the policy is meant to solve.

First, the regime struggles on its own terms. Huang’s central move is to dissolve the chip-bottleneck premise. At the lowest layers of the stack, energy and chips function as partial substitutes. Where power is abundant, less-advanced chips can still deliver effective compute at scale.

The PRC has built massive excess power-generation capacity (“data centers that are sitting completely empty, fully powered… ghost cities… ghost data centers”) and is willing to absorb environmental, fiscal, and political costs U.S. operators will not. Under those conditions, “seven-nanometer chips are essentially Hopper… today’s models are largely trained on Hopper.” Huawei has shipped millions of accelerators, and its Ascend roadmap, now including FP4 support on the 950 family, is narrowing the per-chip performance gap export controls were meant to lock in. (FP4 is a 4-bit floating-point number format; storing each value in so few bits makes AI computation cheaper and faster, at an accuracy cost that models must be able to tolerate.)

AI, as Huang puts it, is “a parallel computing problem.” More lower-end chips, paired with cheap power, can substitute for fewer high-end ones.
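A back-of-the-envelope relation makes the substitution argument concrete. The assumptions are ours, not Huang’s: a fixed target throughput $T$; per-chip throughputs $t_h$ and $t_\ell$ for high- and low-end chips; per-chip power draws $w_h$ and $w_\ell$; and near-perfect parallel scaling, which real clusters only approximate.

$$
N_h = \frac{T}{t_h}, \qquad N_\ell = \frac{T}{t_\ell}, \qquad
\frac{P_\ell}{P_h} = \frac{N_\ell\, w_\ell}{N_h\, w_h} = \frac{t_h / w_h}{t_\ell / w_\ell}
$$

On these assumptions, the power penalty for going low-end equals the gap in performance per watt. With purely illustrative numbers, a chip with one quarter the throughput at half the power needs four times as many units and twice the total power ($P_\ell / P_h = 2$). Where electricity is abundant and cheap, that is a penalty an operator can choose to absorb.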

A second point follows. Frontier capability now turns more on algorithmic and architectural advances than on transistor scaling. Moore’s Law contributes roughly 25% annually; computer-science gains deliver order-of-magnitude improvements per generation. The International Center for Law & Economics (ICLE) has made a similar point about the limits of static performance metrics.
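A rough compounding check shows why the two rates are not comparable. Taking the 25% annual figure at face value, and assuming (for illustration only) a two-year hardware generation:

$$
1.25^{2} \approx 1.56\times \text{ per two-year generation}, \qquad
n = \frac{\ln 10}{\ln 1.25} \approx 10.3 \text{ years to reach } 10\times
$$

On those assumptions, transistor scaling alone needs about a decade to deliver the single order-of-magnitude gain that the passage attributes to computer-science advances within one generation.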

Roughly half of the world’s AI researchers are Chinese. The key levers (Mixture-of-Experts architectures, attention variants, low-precision numerics, and distributed training) are not export-controllable. Mixture-of-Experts models route each input token to a small subset of specialized sub-networks, or “experts,” so only a fraction of the model’s parameters do work at any one time, which makes training and inference more efficient. Distributed training spreads computation across many chips or machines.
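For readers who want to see the routing idea, here is a minimal top-k Mixture-of-Experts layer in plain Python. It is a generic textbook sketch, not DeepSeek’s or any other lab’s implementation. The router is a single linear scoring step, the gates are a softmax over only the selected experts’ scores (one common variant among several), and the “experts” are hypothetical toy functions standing in for neural sub-networks.

```python
import math
from typing import Callable

# A hypothetical "expert": any function mapping a token vector to an output vector.
Expert = Callable[[list[float]], list[float]]


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    token: list[float],
    router_weights: list[list[float]],
    experts: list[Expert],
    k: int = 2,
) -> tuple[list[float], list[int]]:
    """Route one token to its top-k experts and mix their outputs.

    Returns the combined output and the indices of the experts that ran.
    """
    # Router: one score per expert, a dot product of the token with that expert's row.
    scores = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # Keep only the k highest-scoring experts; the others do no work for this token.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Gate weights: softmax over the chosen experts' scores only, so they sum to 1.
    gates = softmax([scores[i] for i in chosen])
    output = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        expert_out = experts[i](token)
        output = [o + gate * y for o, y in zip(output, expert_out)]
    return output, chosen


if __name__ == "__main__":
    # Four toy experts; only two will run for any given token.
    toy_experts: list[Expert] = [
        lambda x: [2.0 * v for v in x],
        lambda x: [v + 1.0 for v in x],
        lambda x: [0.0 for _ in x],
        lambda x: [-v for v in x],
    ]
    router = [[0.1, 0.0], [2.0, 0.0], [1.0, 0.0], [-1.0, 0.0]]
    out, used = moe_forward([1.0, 0.0], router, toy_experts, k=2)
    print("experts used:", used)
    print("output:", out)
```

The line that keeps only k experts carries the efficiency argument: the model can hold many experts’ worth of parameters while each token pays the compute cost of just k of them. It is an algorithmic choice, which is why it sits outside the reach of hardware export controls.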

DeepSeek V4 provides a clean proof of concept. Its kernels no longer rely purely on CUDA; they instead target TileLang, a portable domain-specific language (a programming language designed for a narrow technical purpose, here writing high-performance kernels). Its post-training and inference pipelines use MXFP4, a 4-bit “microscaling” format standardized through the Open Compute Project, to run across heterogeneous chips (different types of chips used together). Its kernels already run on Huawei Ascend supernodes.
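To show what a 4-bit “microscaling” format does, here is a simplified sketch of MXFP4-style quantization. It loosely follows the recipe in the Open Compute Project’s Microscaling (MX) specification as we understand it: values are grouped into blocks of 32, each block shares one power-of-two scale, and each element is stored as a 4-bit E2M1 float whose magnitude can only be 0, 0.5, 1, 1.5, 2, 3, 4, or 6. It is an illustration, not DeepSeek’s pipeline. Real implementations pack bits and handle special values, and the specification calls for round-to-nearest-even, whereas this sketch breaks ties toward the smaller magnitude.

```python
import math

# Magnitudes representable by FP4 E2M1 (1 sign bit, 2 exponent bits, 1 mantissa bit).
FP4_E2M1_VALUES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
FP4_EMAX = 2      # exponent of the largest E2M1 value: 6.0 = 1.5 * 2**2
BLOCK_SIZE = 32   # elements that share one scale in the MX formats


def quantize_fp4(x: float) -> float:
    """Round x to the nearest E2M1 value, saturating at +/-6.0.

    Ties go to the smaller magnitude (a simplification of round-to-nearest-even).
    """
    magnitude = min(abs(x), FP4_E2M1_VALUES[-1])
    nearest = min(FP4_E2M1_VALUES, key=lambda v: abs(v - magnitude))
    return math.copysign(nearest, x)


def mx_block_quantize(block: list[float]) -> tuple[float, list[float]]:
    """Return (shared power-of-two scale, FP4 elements) for one block."""
    amax = max(abs(v) for v in block)
    if amax == 0.0:
        return 1.0, [0.0] * len(block)
    # Scale so the block's largest value maps into [4, 8); anything above 6.0 saturates.
    scale = 2.0 ** (math.floor(math.log2(amax)) - FP4_EMAX)
    return scale, [quantize_fp4(v / scale) for v in block]


def mx_round_trip(values: list[float]) -> list[float]:
    """Quantize block by block, then dequantize, to expose the rounding error."""
    restored: list[float] = []
    for start in range(0, len(values), BLOCK_SIZE):
        scale, elements = mx_block_quantize(values[start:start + BLOCK_SIZE])
        restored.extend(scale * e for e in elements)
    return restored


if __name__ == "__main__":
    weights = [0.01] * 32 + [0.1, -0.37, 2.9, 4.6]
    for original, restored in zip(weights[30:], mx_round_trip(weights)[30:]):
        print(f"{original:+.4f} -> {restored:+.4f}")
```

Because each block carries its own scale, small and large values in different blocks both keep usable precision despite only four bits per element. A shared, published encoding like this is plausibly one reason a low-precision pipeline can target several vendors’ chips rather than one.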

On this margin, the export regime is accelerating, not slowing, the indigenization of the Chinese stack.

Anthropic’s Mythos model sharpens the calibration problem. Huang notes that Mythos was trained on “fairly mundane capacity and a fairly mundane amount of it.” The cyber-offensive capabilities that motivate chip controls are not tightly gated by marginal access to frontier accelerators.

If the concern is models like Mythos, the relevant lever is the capability layer, not the chip layer. That means restrictions on releasing models with materially dual-use capabilities—models useful for both civilian and harmful purposes—combined with controls on remote access, something akin to know-your-customer (KYC) rules for APIs.

Anthropic’s decision to withhold Mythos from all users—not just Chinese ones—until vulnerabilities were patched offers a real-world example of capability-layer governance. ICLE’s comments to the National Telecommunications and Information Administration (NTIA) on dual-use foundation models and misuse risk sketch out the doctrinal framework. A regime that overweights chip controls while underinvesting in capability controls is mismatched to its own threat model.

The second problem is more structural: The regime cedes the ecosystem layer to PRC-centered stacks.

Ecosystem analysis makes this visible in a way component-level analysis cannot. Durable advantage in AI comes from orchestrating capital, complementors, developers, standards, and customers around a credible roadmap. Export controls that wall U.S. firms out of the world’s second-largest computing market undercut that ecosystem across all of those margins.

Huang is explicit: “50% of the AI developers are in China. We don’t, we shouldn’t, the United States should not give that up.” And: “China is the largest contributor to open source software in the world… the largest contributor to open models in the world… today, it’s built on the American tech stack, NVIDIA.”

The question is not whether China reaches the frontier. It is which stack the global developer base optimizes for along the way.

If U.S. policy forces Chinese developers to build on domestic stacks—because they lack access to U.S. alternatives—those optimizations will propagate outward. As Chinese platforms mature, they diffuse into the Global South, the Middle East, Southeast Asia, and parts of Europe. Huang puts the endgame plainly: “AI models around the world… run best on not American hardware.”

That is the ecosystem-fragmentation cost the current regime imposes on its own intended beneficiaries.

The takeaway is not to abandon controls. It is to target the right layer.

An ecosystem-aware export-control regime would shift weight from chip-layer to capability-layer interventions. It would treat developer mindshare and framework standards as first-order policy concerns. It would recalibrate the Committee on Foreign Investment in the United States (CFIUS), the Bureau of Industry and Security (BIS), and outbound-investment regimes to reflect how ecosystems actually form—through partnerships, equity-and-compute deals, and standards bodies—not just through component flows.

This is not a call to “open the doors.” It is what follows from taking both the empirical and theoretical records seriously.

Coordination Eats the Widget

The garage-and-widget story always understated the roles of standards, capital markets, supplier coordination, and complementor mobilization. In an AI economy built on five-layer stacks, it is no longer even a useful approximation. The firms that lift the system are the ones that make it rational for everyone else to coordinate around them—and that means absorbing coordination costs the rest of the ecosystem would otherwise bear.

For competition policy, the question is not “is this firm dominant?” It is “is the ecosystem contestable, and does the orchestrator face enough discipline to keep it honest?”

For U.S.–China strategy, DeepSeek does not show that China cannot reach the frontier. It shows that reaching the frontier is not enough. The real contest runs through developer mindshare, capital flows, complementor density, and standards. Policies that concede those layers in the name of locking down the chip layer will miss their target—and, as Huang warns, risk achieving the opposite.

The firms that matter are no longer those with the cleverest widget. They are the ones that make every adjacent actor’s bet rational—“as much as necessary, as little as possible.”

That is the game now.