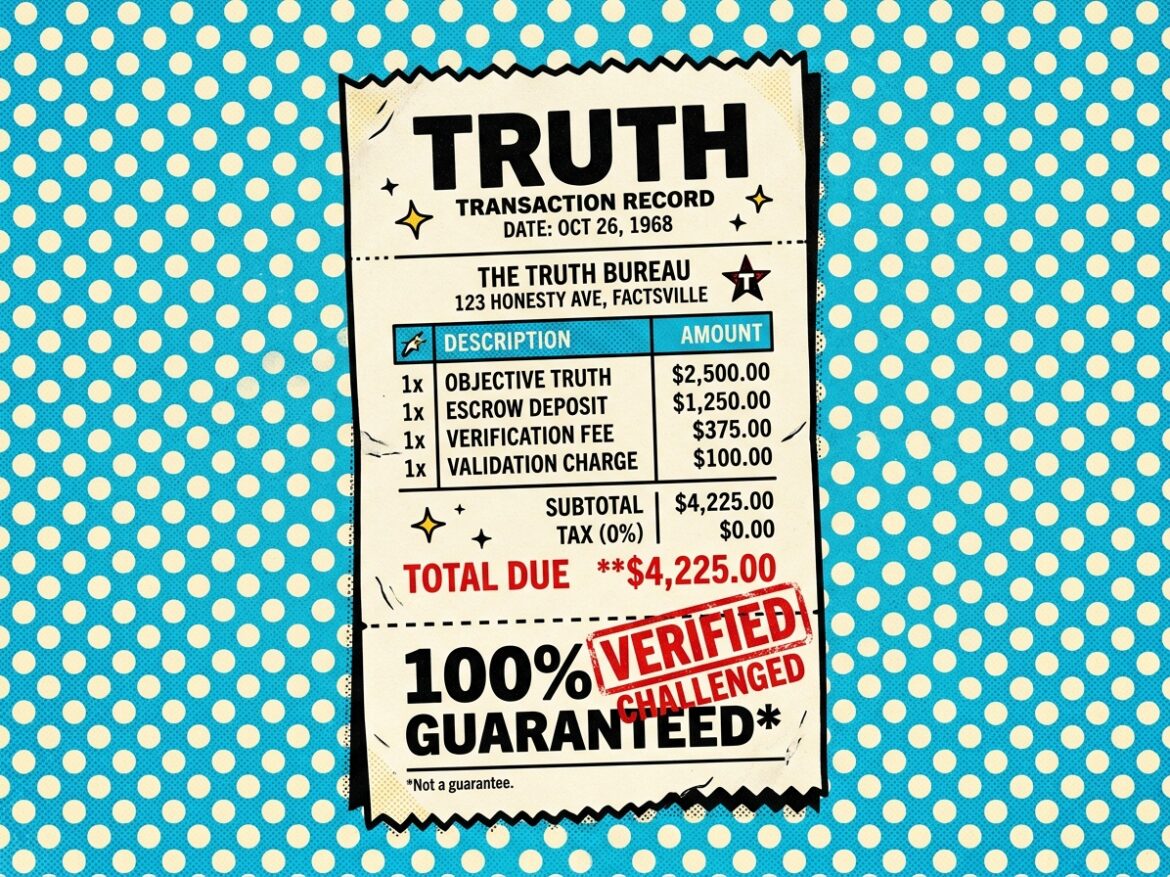

In a seventh-season episode of The Simpsons, Bart tunes in to the Impulse Buying Network and spends $350 on an animation cel from The Itchy & Scratchy Show. As part of the pitch, an IBN huckster proclaims: “Each one is absolutely positively 100% guaranteed to increase in value!” An immediate disclaimer follows: “not a guarantee.” The joke lands on a familiar premise—guarantees aren’t real.

But they are real. Evidence shows guarantees can signal quality and encourage transactions. Firms that guarantee products or services often see substantial gains in business.

At a recent conference in Boston, we examined proposals for “truth guarantees” and similar market-oriented approaches aimed at mitigating “misinformation.” Guaranteeing truth could improve content quality, increase trust in journalism, and bolster the reliability of social media—especially if platforms boost guaranteed items. These proposals, however, face several challenges.

Putting a Price on Truth

Boston University Professor Marshall Van Alstyne has conducted extensive research on addressing misinformation by warranting the validity of claims. He analogizes misinformation to pollution. Just as air pollution contributes to lung disease, bad information produces externalities—insurrectionist activity, vaccine resistance, the assassination of health insurance CEOs, and other forms of social disorder.

The standard responses to externalities are familiar. In the information context, however, speech values and the First Amendment sharply—and appropriately—limit them. Direct government regulation of communications is off the table; it amounts to censorship. Pigouvian taxation fares little better. Imposing costs on producers of misinformation to compensate for harm is a content-based burden on speech that would likely fail constitutional scrutiny. In both cases, empowering a central authority to determine truth is poor policy and likely to deepen distrust.

Ronald Coase offers an alternative. A better-functioning market could enable trades that reduce misinformation or internalize its costs.

What scarce resource anchors such a market? Van Alstyne points to attention. Information consumes attention. A more efficient market for attention should improve information quality, reduce attention devoted to bad information, and mitigate associated externalities.

Free-speech norms still apply. Listeners—those who supply attention—must remain free to choose their sources. They may use that freedom to remain in filter bubbles.

Speakers, however, want to be heard. They want to penetrate those bubbles.

The question, then, is how to bring speakers and listeners together on better terms. How can incentives shift so that speakers share—and listeners engage with—more truthful content?

Research suggests modest interventions help. Prompting social media users to consider truth and falsity reduces the sharing of false information. Requiring users to certify that shared content is true reduces falsity further. These measures, however, also slightly reduce the sharing of true information.

Van Alstyne proposes a contractual right to accurate information. Speakers—here, authors—would post a “truth bounty,” effectively placing funds in escrow to back their claims. If a claim proves true, the speaker recovers the bounty after reaching audiences they might not otherwise reach. If the claim proves false, the speaker forfeits the escrow. The system raises the cost of spreading false information.
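The payoff logic of such a bounty can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Van Alstyne's actual design: the `TruthBounty` class, its field names, and the sample claim are hypothetical, and the sketch leaves open who decides the verdict and who receives a forfeited stake.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TruthBounty:
    """Hypothetical escrow record for one warranted claim."""
    claim: str
    stake: float                     # funds the speaker places in escrow
    verdict: Optional[bool] = None   # None until the claim is resolved

    def resolve(self, claim_is_true: bool) -> None:
        """Record the outcome; how that outcome is decided is out of scope."""
        self.verdict = claim_is_true

    def refund_to_speaker(self) -> float:
        """Amount returned to the speaker once the claim is resolved."""
        if self.verdict is None:
            raise ValueError("claim has not been resolved yet")
        return self.stake if self.verdict else 0.0

    def forfeited(self) -> float:
        """Amount the speaker loses; the recipient is left unspecified."""
        return self.stake - self.refund_to_speaker()


# Usage: a speaker stakes 100 on a claim that is later found false.
bounty = TruthBounty(claim="Example claim", stake=100.0)
bounty.resolve(claim_is_true=False)
print(bounty.refund_to_speaker(), bounty.forfeited())
```

The asymmetry is the whole point: a true claim costs the speaker nothing beyond the time the funds sit in escrow, while a false one costs the full stake.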

His research suggests this approach works. When speakers face rewards for truth and penalties for falsity, they share more accurate information. When listeners see that a speaker has warranted a claim, they tend to trust it more.

In “Certifiably True: The Impact of Self-Certification on Misinformation,” Van Alstyne and his co-authors find that engagement increases when truth bounties are available—more information gets shared. Lowering the cost of truth and raising the cost of falsehood yields more accurate content. Unlike efforts to cajole or coerce speakers or platforms, truth guarantees align with both the spirit and the letter of free speech.

This approach is not a cure-all. It will not eliminate uncertified false information. And when speakers falsely warrant claims, listeners may initially find those claims more credible—at least until they are challenged. Still, the results are promising and intuitively sound.

Skin in the Game—or Don’t Play

Van Alstyne is not alone in developing this approach. Yonathan Arbel of the University of Alabama School of Law and Michael Gilbert of the University of Virginia School of Law propose a contractual mechanism that allows speakers—individuals, media outlets, and others—to signal credibility through financial commitments.

In “Truth Bounties: A Market Solution to Fake News,” Arbel and Gilbert lay out a detailed framework for guaranteeing truth. They address the full lifecycle: staking money on a claim’s accuracy, contesting claims, arbitrating disputes, and resolving outcomes. Their core insight is simple: “Having something to lose … signals to consumers that [speakers] tell the truth.”

Their proposal engages closely with institutional design, including how challenges should work.

Challenges could proceed in different ways, but for communications on the internet, a straightforward way would involve clicking on [an] icon. Doing so could bring challengers to a website, where information on the story—title, date, publisher, author—would load automatically, allowing the challenger to pursue her complaint. The challenge window would remain open for a set duration, similar to a statute of limitations—for example, one year.

…Whether out of malice or ignorance, people could clog the system with meritless challenges. To mitigate this problem, the system could charge a challenge fee. The fee would force the challenger to put skin in the game. Like court fees, paying the challenge fee signals that the challenger has confidence in the merits of her claim.

Arbel and Gilbert recognize the familiar tradeoff. Fees must deter weak claims without blocking strong ones.

A small fee would fail to screen out meritless challenges, but a large fee could block even meritorious ones. One approach would be to set the challenge fee as a single-digit % of the bounty, subject to a minimum. In any event, experience would inform the optimal amount.
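The fee rule described here, a single-digit percentage of the bounty with a floor, is easy to express in code. The sketch below is a minimal illustration; the function name, the 5% default rate, and the $10 minimum are assumptions chosen for the example, not figures from Arbel and Gilbert.

```python
def challenge_fee(bounty: float, rate_percent: float = 5.0,
                  minimum: float = 10.0) -> float:
    """Fee a challenger pays: a percentage of the bounty, with a floor.

    The defaults (5 percent, 10 dollar floor) are illustrative assumptions.
    """
    if bounty < 0:
        raise ValueError("bounty must be non-negative")
    if not 0 < rate_percent < 10:
        raise ValueError("rate must be a single-digit percentage")
    return max(minimum, bounty * rate_percent / 100)


# Usage: the floor binds for small bounties, the percentage for large ones.
print(challenge_fee(100.0))    # the percentage fee (5.0) is below the floor
print(challenge_fee(1000.0))   # the percentage fee (50.0) exceeds the floor
```

The floor keeps challenges to small bounties from being nearly free, while the percentage scales the challenger's stake with the size of the claim being contested.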

Betting Against the Tin Foil Hat

Prospective guarantees are not the only path. Enrique Guerra-Pujol of the University of Central Florida proposes an ex post approach. In “Betting on Conspiracy Theories: Kurt Gödel and ‘The Leibniz Cover-Up,’” he suggests that communities—whether or not speakers participate—can sort truth from falsity through modified prediction markets.

The paper ranges widely, drawing on Michel Foucault, Richard Dawkins, and others. Guerra-Pujol uses logician Kurt Gödel’s fixation on an unlikely conspiracy against Gottfried Leibniz as a starting point for a broader method to discipline conspiratorial thinking. The core idea will sound familiar: put skin in the game.

[I]nstead of trying to censor or suppress conspiracy theories, why not allow people to bet on them? A betting market would aggregate all available information about the truth-values of various conspiracy theories by allowing bettors to bet on their beliefs about past events.

Guerra-Pujol sketches a “retrodiction market” in which participants buy and sell contracts tied to specific conspiracy theories. Each contract presents a binary outcome: true (T) or false (F). Buying either contract amounts to a wager on the correct answer.

[I]f some bettors believe that Lee Harvey Oswald was part of a conspiracy or that 9/11 was an inside job, they could buy T contracts on these topics, and conversely, if other bettors think that Oswald indeed acted alone or that 9/11 was not an inside job, bettors could buy F contracts. The prices of these bets would then be based on supply and demand, depending on how many bettors buy T or F contracts. If more bettors believe Oswald was part of a conspiracy, the price of the T contract will rise, while if more bettors believe Oswald acted alone, the price of the F contract will rise. Furthermore, the more participants or bets there are, the more likely that prices will reflect the true probabilities of the various conspiracy theories being bet on.
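The passage does not spell out a pricing rule, so the following is one simple stand-in rather than Guerra-Pujol's mechanism. It assumes a parimutuel-style pool in which each contract's price equals the share of all money staked on that side, so the two prices sum to 1 and can be read as implied probabilities. The function name and the stake amounts are hypothetical.

```python
from typing import Tuple


def implied_prices(t_stake: float, f_stake: float) -> Tuple[float, float]:
    """Return (price_T, price_F) as each side's share of the total pool.

    Assumes a parimutuel-style pool; real markets may price differently.
    """
    if t_stake < 0 or f_stake < 0:
        raise ValueError("stakes must be non-negative")
    total = t_stake + f_stake
    if total == 0:
        raise ValueError("no money has been staked yet")
    return t_stake / total, f_stake / total


# Usage: more money on F pushes the F price up and the T price down.
price_t, price_f = implied_prices(t_stake=300.0, f_stake=700.0)
print(price_t, price_f)

# A new bettor adds 400 to the T side, and the T price rises.
price_t_after, _ = implied_prices(t_stake=700.0, f_stake=700.0)
print(price_t_after)
```

Under this rule, every additional dollar on one side raises that side's price, which matches the qualitative behavior the passage describes.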

These markets would do more than aggregate beliefs. They would create incentives to surface and promote evidence. Participants with money at stake would work to persuade others and uncover facts that support their positions. In a sufficiently liquid market, with real stakes on the line, even long-disputed questions—such as who killed John F. Kennedy—might converge toward clearer answers.

Markets Don’t Lie—They Pay

Does Guerra-Pujol ask us to imagine things that never were and wonder why not? (Apologies.) Hardly. A version of the retrodiction market already exists.

Hindenburg Research combined investigative journalism with short selling. The firm took positions against companies based on research into alleged fraud or mismanagement, then publicized its findings to regulators and the press. As stock prices fell, Hindenburg profited. When the firm shut down in early 2025, founder Nate Anderson credited its work with contributing to nearly 100 civil or criminal charges “including billionaires and oligarchs. We shook some empires that we felt needed shaking.”

Again, money where one’s mouth is creates incentives to uncover and disseminate truth. Successor firms have emerged. The information environment improves.

Guerra-Pujol’s retrodiction markets aim to do something similar for conspiracy theories and false beliefs. The Hindenburg model shows how financial incentives can discipline corporate misconduct. Prediction markets—such as Polymarket and Kalshi—extend the same logic to other domains.

Consider a hypothetical. An investigator or congressional staffer uncovers clear evidence that a member of Congress accepted bribes. A prediction market that lets the evidence-holder profit, for example by trading on a contract that asks “Will Congressman Crooked serve out his term?”, would accelerate disclosure. When self-interest aligns with accurate information, the result can be faster revelation and broader dissemination. The potential gains are significant: reducing the externalities that information asymmetries create across many domains.
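The evidence-holder's incentive in this hypothetical can be made concrete with a little arithmetic. The sketch below assumes a binary contract that pays $1 if its outcome occurs and nothing otherwise; the function name, the price, and the position size are illustrative assumptions, and the sketch ignores fees and any legal limits on such trading.

```python
def profit_if_correct(contracts: int, price: float,
                      payout: float = 1.0) -> float:
    """Profit from holding binary contracts that resolve in the holder's favor.

    Each contract costs `price` and pays `payout` if the outcome occurs.
    """
    if contracts < 0:
        raise ValueError("contracts must be non-negative")
    if not 0 < price < payout:
        raise ValueError("price must lie strictly between 0 and the payout")
    return contracts * (payout - price)


# Usage: the market thinks early departure is unlikely, so "No" is cheap.
# The evidence-holder buys 1,000 "No" contracts at 25 cents each.
print(profit_if_correct(contracts=1000, price=0.25))
```

The cheaper the contract before disclosure, the larger the reward for being right, which is why private evidence is most valuable when the market least expects it.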

Truth bounties, retrodiction markets, and the Hindenburg model all rely on the same mechanism: markets that reward accuracy and penalize error. They are absolutely positively 100% guaranteed to work.*

*Not a guarantee.

In a forthcoming post, we will examine the challenges these approaches face.