A pair of jury verdicts last week, along with a quiet settlement, may mark a turning point for the American internet—and not one that favors free expression.

For years, digital platforms have relied on two core protections: the First Amendment and Section 230. Together, they let companies host, organize, and moderate speech without facing crushing liability. In a 48-hour span, that foundation took a hit.

A New Mexico jury delivered a $375 million verdict against Meta on child-safety claims. A California jury found Meta and Google (through YouTube) liable for allegedly addictive design features. Meanwhile, Missouri v. Biden, the case over government pressure on social media, ended in a settlement that promises restraint but sets little precedent.

Taken together, these developments push platforms toward tighter speech controls. They also showcase a rising legal strategy: recasting editorial decisions as defective-product design.

At the same time, the Missouri v. Biden settlement leaves largely intact the government’s ability to pressure platforms behind the scenes. Little now prevents government officials—or even private litigants—from pushing social media companies to significantly reduce speech without any formal legislative or regulatory action.

If Speech Is a Product, Everything Is a Defect

The juries in Santa Fe and Los Angeles reached a similar conclusion. They treated Meta’s and Google’s platforms not as forums for speech, but as engineered products capable of causing harm. That shift reframes how courts evaluate platform behavior—and raises a threshold question: when does it make sense to treat online speech platforms as products?

From a law & economics perspective, intermediary liability can make sense when the intermediary is the least-cost avoider of harm. Meta, for example, may be well-positioned to protect minors if it can monitor and control harms at relatively low cost. Even then, that finding does not end the analysis. Courts must still ask whether imposing liability produces greater social costs than it prevents. In the online-speech context, that means weighing accountability against the risk of collateral censorship, in which platforms restrict lawful speech simply to avoid liability.

Juries can and should resolve questions of fact. Judges, by contrast, must get the law right. Here, that means asking how consumer-protection or product-liability theories apply in light of the First Amendment and Section 230. It is not clear the courts in New Mexico and California did so.

In New Mexico, the court sidestepped Section 230 by focusing on Meta’s conduct rather than the effects of applying consumer-protection law to user-generated content. The case turned on allegations that the company failed to prevent child exploitation and misled users about safety. The jury found those actions violated the state’s Unfair Practices Act.

In California, jurors were instructed to ignore content altogether. They focused instead on features such as infinite scroll and autoplay. By isolating design from content, the court treated the platforms' feeds and recommendation algorithms as product mechanisms rather than editorial tools.

That distinction doesn’t hold. Decisions about how to present content—what to prioritize and how to display it—are editorial judgments. Courts have long recognized that the First Amendment and Section 230 protect not just what is said, but how it is presented.

A newspaper chooses placement, headlines, and story order to capture attention. Platforms do the same with feeds, notifications, and recommendations. Labeling those choices “addictive design” does not strip them of their expressive character; it attempts to sidestep the First Amendment. Section 230 likewise protects not just the conveyance of information, but its display. Section 230(f)(4) expressly defines covered “access software providers” to include providers of software or enabling tools that “filter, screen, allow, or disallow content… pick, choose, analyze, or digest content… or transmit, receive, display, forward, cache, search, subset, organize, reorganize, or translate content.”

The International Center for Law & Economics (ICLE) has warned courts against this sleight of hand in its amicus brief to the Massachusetts Supreme Judicial Court on similar claims. When plaintiffs repackage speech decisions as product defects, they invite courts to regulate expression through consumer-protection or tort law, weakening constitutional protections without confronting them directly.

The logic extends beyond presentation. Claims that platforms acted negligently by failing to verify users’ ages clash with federal court rulings that have blocked state age-verification mandates for social media. Courts should not allow common-law claims to impose what statutes cannot.

Jawboning Without Consequences

While the jury verdicts target design, Missouri v. Biden centers on government influence. The case alleged that federal officials pressured platforms to suppress disfavored views on COVID-19 and elections. The U.S. Supreme Court never reached the merits; in Murthy v. Missouri (2024), it held that the plaintiffs lacked standing to seek a preliminary injunction. Even so, the settlement amounts to a tacit acknowledgment that the government’s pressure campaign crossed a line, despite the absence of a definitive judicial ruling.

That acknowledgment matters. Government threats can distort the marketplace of ideas and produce government failure worse than the market failure they purport to fix. Even well-intentioned efforts can turn coercive when backed by regulatory authority.

The settlement, however, is narrow. Only the parties can enforce it, and only against the federal agencies originally sued. Missouri and Louisiana may act only when pressure affects their own official speech—not the speech of their citizens. The agreement creates no precedent for others, including those facing pressure from different federal agencies or from state and local officials.

The broader dynamic remains intact. Future administrations can continue to test the limits of informal pressure. If the jury verdicts stand, they introduce another background regulatory threat—one that state officials can use to push for changes without public scrutiny. Platforms, wary of investigations and the risk of ruinous consumer-protection suits, may comply. Private litigants, too, can wield the threat of litigation to force changes in platform behavior.

Large incumbents like Meta and Google may absorb these costs—paying judgments and scaling up content-moderation teams. Smaller platforms, and potential entrants, likely cannot. The result could be perverse: a legal regime that entrenches dominant firms by making it harder for competitors to survive.

The ‘Safe’ Internet Is a Smaller Internet

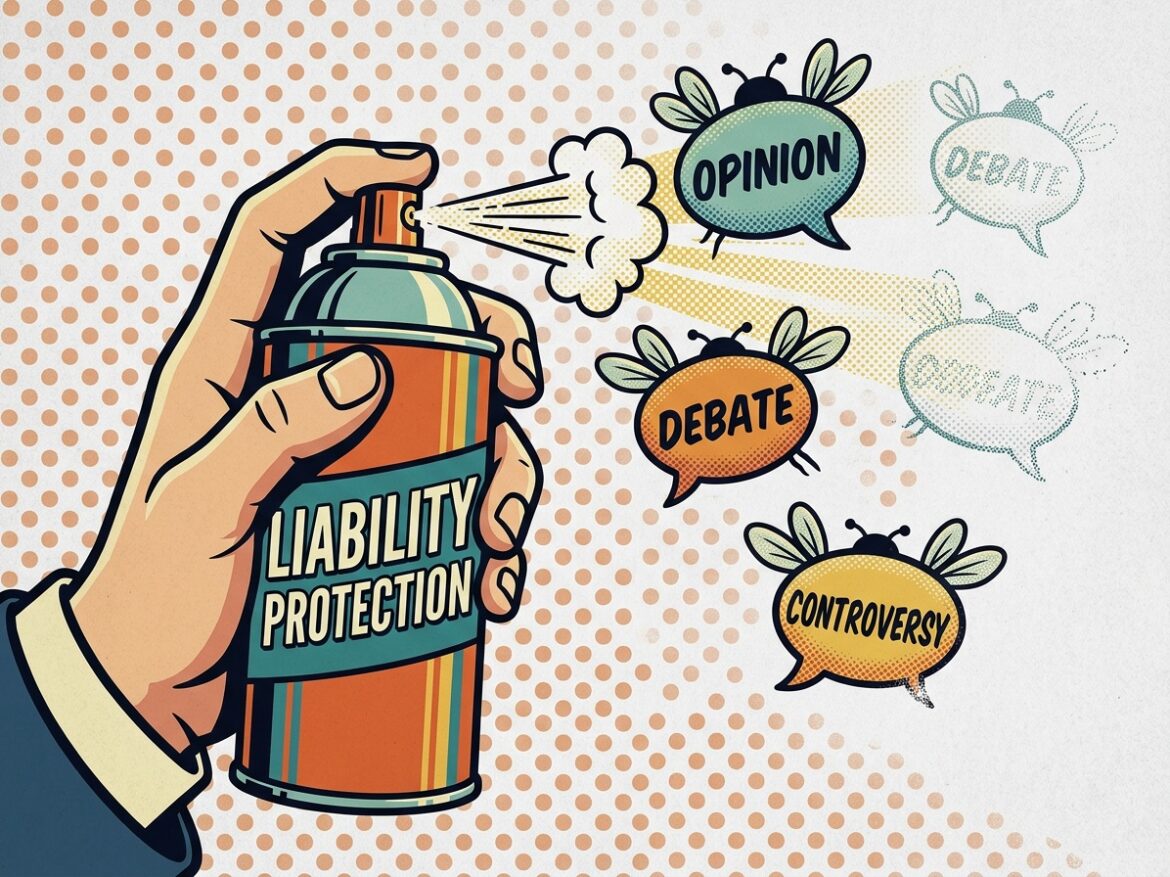

What emerges is a new equilibrium: liability-driven curation.

Platforms now face pressure from every direction. Moderate too little, and they risk consumer-protection suits. Build engaging features, and they invite claims of addiction. Resist government pressure, and they may trigger investigations or regulatory backlash.

Under those conditions, the safe path narrows quickly. Companies will remove more content, limit reach, and steer clear of controversy. They will favor caution over innovation. They will design systems to minimize legal exposure, not to maximize user engagement or expressive diversity.

That shift will not yield a healthier public square. It will produce a quieter one—less dynamic, less open, and less resilient. When the cost of hosting speech rises high enough, platforms host less of it.

The internet did not become a central forum for public discourse by accident. It developed under legal rules that allowed experimentation, risk-taking, and a wide range of voices. Courts, litigants, and government actors now place those rules under sustained pressure.

If this moment marks a turning point, the lesson is straightforward. When the law treats speech as a liability to manage rather than a value to protect, it does not improve speech. It reduces it.