Washington has a choice: let AI policy fragment into 50 competing regimes, or set a clear federal baseline that keeps innovation moving. The Trump administration’s new artificial intelligence (AI) legislative framework stakes out the latter path—but leaves important gaps.

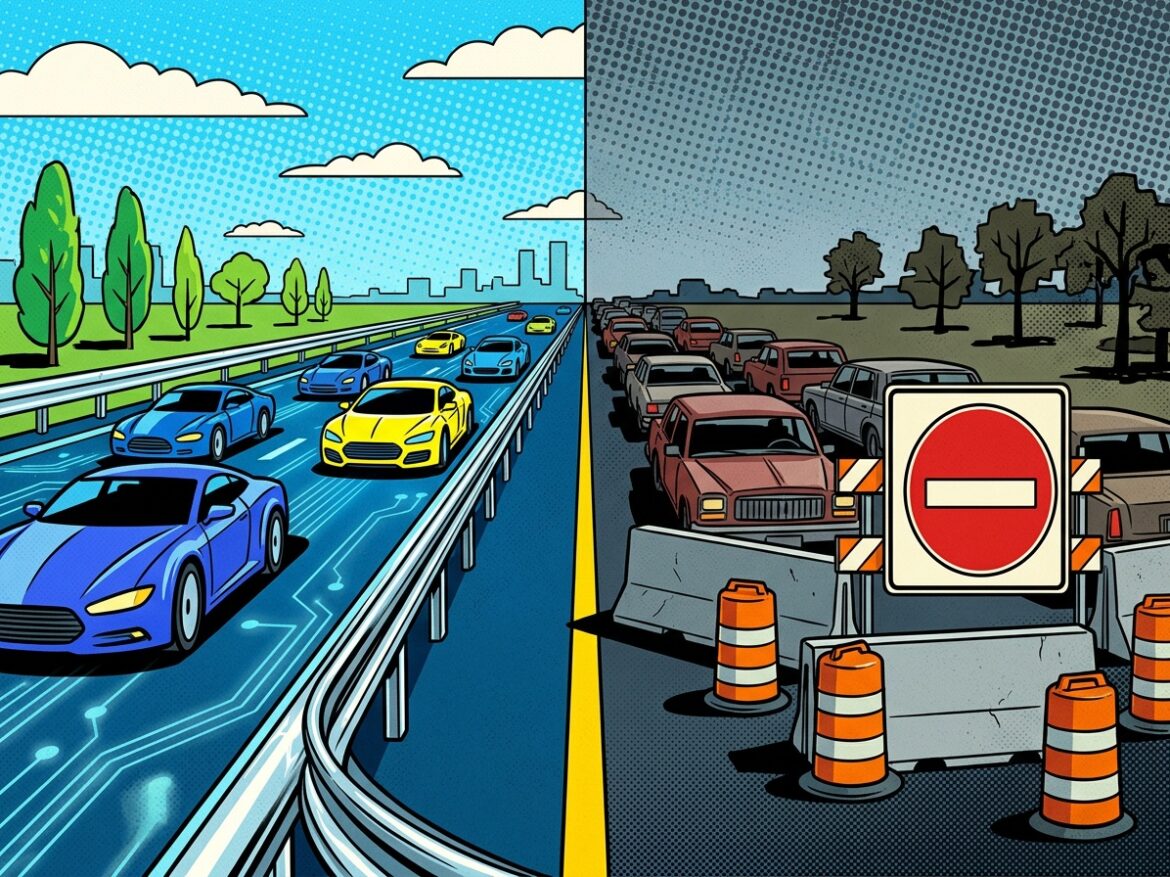

The framework sketches broad principles to guide federal policymaking on a technology at risk of being governed by a balkanized patchwork of state-level rules. Left unchecked, those rules could curb development, competition, and innovation by layering cumulative compliance burdens on businesses.

But the framework itself is thin on details, and Congress may never translate it fully into law. Still, as Kristian Stout notes elsewhere in these pages, it commendably advances a “light-touch federal approach, grounded in existing legal doctrines, and focused on harms rather than speculative risks,” while avoiding premature or overly centralized interventions.

Even so, the framework leaves out several policies and ideas policymakers should consider. Without them, regulatory guardrails for responsible AI development and deployment could end up constraining American competitiveness, innovation, and the economic opportunities that follow.

Threading the Federalism Needle

The framework preserves a role for states. They retain authority over how AI infrastructure—such as data centers and utility poles—gets deployed within their borders, and they can continue to use their police powers to prosecute criminal conduct facilitated by AI technologies.

At the same time, the framework aims to preempt a growing patchwork of state-level AI regulations that constrain nationwide development and innovation. Companies operating across jurisdictions must otherwise navigate disparate, overlapping, and often inconsistent rules. Those requirements raise the cost of doing business and divert resources away from improving products and tools.

The framework also calls for clear standards that leave little room for ambiguous interpretation, which can invite excessive and costly litigation, particularly in areas like child protection. On copyright, it takes a generally permissive approach to using content to train AI models. It leaves specific questions to the courts, such as whether the “fair use” exception covers AI training.

Courts remain well-suited to that task. They tend to account for commercial realities and have repeatedly shown an ability to apply general principles and extensive precedent to new technologies and market developments—creatively, but pragmatically. They also face less risk of regulatory capture or political bias than legislatures or regulatory agencies.

In the end, the framework tries to balance community concerns about emerging harms with skepticism of regulatory overreach, and federalism and local autonomy with the Constitution’s protection of interstate commerce. That balance matters for cost-efficient development and deployment.

Consistent with these principles, and with the goal of holding bad actors accountable without undermining innovation and competition, policymakers should also consider several other areas of emerging concern.

Consent Fatigue Meets Compliance Costs

Conspicuously absent from the framework is any mention of a federal data-privacy standard. That omission matters. State privacy laws vary widely, and those differences shape companies’ ability to access depersonalized data needed to train AI models, improve accuracy, and serve users effectively. Without representative data, model performance suffers, and existing biases can worsen—undermining tools such as fraud detection, tailored recommendations, and advanced analytics.

More than 20 states have enacted comprehensive data-privacy standards, creating a fragmented regulatory landscape. That patchwork raises compliance costs and complexity for businesses. It also hits “little tech” hardest, as smaller firms lack the scale to spread fixed regulatory costs. As Jennifer Huddleston of the Cato Institute observes:

Because [different state privacy] laws have different models, businesses may not merely be able to comply with the most restrictive one. Instead, they will likely incur additional costs and require additional time for each law rather than developing the best privacy and security options for their product’s intended audience more generally.

These barriers to competition grow when privacy regimes become especially burdensome. One 2022 study found 33% fewer new apps entered the European mobile app market than expected following adoption of the European Union’s General Data Protection Regulation (GDPR). The California Consumer Privacy Act (CCPA) alone was projected to impose roughly $55 billion in initial compliance costs, affecting between 15,643 and 570,066 businesses.

Privacy rules can also degrade the user experience. Consent pop-ups introduce friction, increasing the time and effort required to navigate digital services, without delivering commensurate benefits. Studies show users routinely dismiss these interruptions, signaling a preference for streamlined experiences over additional, low-value choices. That behavior has downstream effects: fewer users consent to depersonalized data collection for AI training, further limiting data quality.

The consequences fall unevenly. When friction drives users away from major platforms, smaller businesses suffer most—especially those that rely on platform access, lack brand recognition, or depend on data-driven tools to reach customers. The same dynamic affects firms increasingly reliant on AI-enabled tools, whether integrated into platforms or deployed independently.

The framework’s preference for federal preemption of state AI rules points in a clear direction: Congress should adopt a federal privacy standard that similarly preempts state laws. Done right, such a standard could balance user autonomy and informed consent with the need for access to depersonalized data—without imposing the kind of excessive costs that only large incumbents can absorb.

Recent legislative efforts suggest a less promising path. Under the Biden administration, Congress considered the American Data Privacy Protection Act (ADPPA), which would not have fully preempted state laws and took a more heavy-handed approach. The bill would have required firms holding large volumes of data to submit impact assessments to the Federal Trade Commission (FTC) for nearly all algorithmic activities, increasing administrative costs and uncertainty. Regulators could have rejected those submissions as insufficiently detailed, exposing even good-faith actors to significant litigation risk.

The ADPPA ultimately failed to advance. But without a workable federal standard, future proposals may revive similarly burdensome approaches. That raises the stakes for getting privacy policy right sooner rather than later.

The Case for Letting Employers Do the Training

The framework also favors “non-regulatory methods to ensure that existing education programs and workforce training and support programs, including apprenticeships, affirmatively incorporate AI training.” That likely reflects the administration’s broader AI action plan, which urged the U.S. Treasury Department to clarify that AI literacy and skills programs qualify as “educational assistance” under Section 132 of the Internal Revenue Code. That change would allow employers to offer tax-free reimbursement for AI-related training.

Additional tax reforms could go further. My Mercatus Center colleague Revana Sharfuddin has argued for policies such as full business expensing to encourage employer investment in AI-related workforce training. Employers increasingly report that many college graduates lack the skills to use AI tools effectively—tools that now play a central role across industries. As a result, firms must shoulder more of the burden to train and upskill their workforce.

Full expensing would change those incentives. If employers could fully deduct the cost of training programs, they would have stronger reason to invest in workforce development. That approach would also reduce pressure for government-run training initiatives, avoiding the need to layer new federal programs on top of existing ones.

It could also help workers adapt to technological change. As AI reshapes the labor market through automation, expanded access to training can prepare workers for new—and potentially more lucrative—roles, including entrepreneurial opportunities.

Build the Pipes Before Regulating the Flow

In the same vein, policymakers should extend full business expensing to a broader range of AI-enabling infrastructure. That includes utility poles for broadband deployment; expensing them would advance the goals of the $42.5 billion Broadband Equity, Access, and Deployment (BEAD) program. BEAD funds states and localities to expand high-speed internet access, largely through private-sector partnerships.

The AI implications are substantial. Many rural and regional communities still lack reliable high-speed internet, which underpins applications such as industrial automation, advanced health care, and remote education. For latency-sensitive uses, such as autonomous vehicles, even delays of a fraction of a second can have serious consequences.

BEAD funding should also come with conditions that encourage states and localities to reduce unnecessary regulatory barriers to infrastructure deployment, particularly where the costs outweigh the benefits. Critics may argue that such conditions infringe on state autonomy, especially given the framework’s emphasis on federalism in areas like zoning. But conditions on federal grants do not prevent states from spending their own funds as they see fit. They simply aim to ensure federal taxpayer dollars deliver maximum value.

Tax policy can also support AI infrastructure more directly. Expanding deductions for data center investment would complement state and local efforts, which increasingly rely on tax incentives to attract these facilities. Data centers are essential for AI and cloud computing, both of which demand ever-greater computational capacity. They also require significant capital and operating expenditures.

With that said, data center development remains politically contentious, particularly due to concerns about energy use and resource demands. The framework addresses these concerns by emphasizing that new construction should not raise local electricity costs, consistent with the Ratepayer Protection Pledge that several companies have adopted. In practice, data centers can also lower costs for other ratepayers: for example, by investing in grid infrastructure, supporting new energy generation, or absorbing excess capacity so that fixed grid costs are spread across more demand.

Finally, the framework calls for “streamlin[ing] federal permitting for AI infrastructure construction and operation.” That goal is important, but it does not go far enough. Policymakers should improve coordination across federal, state, and local permitting regimes, as well as among the agencies involved. The same applies to environmental reviews.

Duplicative processes based on inconsistent standards do more than increase costs. They inject uncertainty into project timelines and final expenses—exactly the kind of friction that slows infrastructure deployment and, in turn, AI development.

Fishing Expeditions Won’t Build AI

Recent analysis cited by the Computer & Communications Industry Association (CCIA) estimates that “AI-related products and improvements will contribute $15.7 trillion to the global economy by 2030, including $3.7 trillion to the U.S. economy (14.5% of total estimated GDP).” Those gains could be undermined by costly, ill-advised antitrust litigation and “fishing expedition” investigations driven more by speculative theories of monopolization than by evidence of actual competitive harm.

Concerns raised by U.S. and international competition enforcers about concentration across the AI stack—from data to cloud computing—often overstate the risks. Our research finds these markets remain dynamic and competitive. Partnerships between AI startups and large technology firms illustrate the point. Startups bring ideas and talent; incumbents bring capital, infrastructure, and access to millions of users. These arrangements lower entry barriers, extend the benefits of network effects and scale, and ultimately serve consumers, innovation, and competition.

By contrast, the specter of preemptive antitrust intervention—aimed at “correcting” markets before they supposedly tip—creates real costs. It injects uncertainty into capital-intensive AI investment decisions and diverts resources away from developing and refining new technologies.

That does not mean enforcers should stand down. Agencies such as the FTC and the U.S. Justice Department (DOJ) should continue to monitor AI markets and conduct targeted studies. But enforcement should rest on concrete evidence of practices that materially harm competition—such as conduct that excludes rivals without offsetting consumer benefits—not on abstract theories of future harm or incomplete understandings of evolving technologies.

A more disciplined approach would also encourage firms to experiment and compete, confident that novel business practices will not trigger enforcement based on conjecture rather than evidence.

Rules That Don’t Break the Engine

The national AI framework marks a step in the right direction. Policymakers should treat it as a foundation for future legislation, not a final word. It outlines broad principles rather than prescriptive rules, and it largely embraces a “permissionless innovation” approach that targets concrete harms while remaining wary of regulatory overreach.

Getting the next steps right will require more than siloed expertise. Policymakers must understand not only the distinct issues at each layer of the AI tech stack, but also how those layers interact. Only then can they weigh the benefits of new rules against their likely costs.

Done well, the resulting framework can give businesses the certainty they need while mitigating real harms. It can also preserve the conditions for dynamic competition and continued innovation—unlocking tools with the potential to expand educational, economic, and health-care opportunities for hundreds of millions of Americans.